Introduction

Most hiring leaders believe AI will make recruitment fairer—yet the evidence tells a more complicated story. AI can just as easily entrench bias as eliminate it, depending entirely on how these systems are designed and deployed. The critical question isn't whether AI reduces bias, but under what specific conditions it actually delivers on that promise.

Traditional hiring has well-documented bias problems: recruiters spend an average of just 7.4 to 11.2 seconds scanning resumes, decision fatigue triggers flawed mental shortcuts, and unstructured interviews let unconscious preferences drive outcomes.

AI offers a real path forward through standardized evaluation. But that only holds when the technology is properly designed, regularly audited, and paired with meaningful human oversight.

What follows breaks down where bias enters the process, how well-built AI can address it, where poorly designed tools backfire, and what a responsible implementation actually looks like in practice.

TLDR

- AI reduces hiring bias by standardizing evaluations, removing demographic signals, and applying objective scoring, but only when it is purpose-built for fairness

- AI trained on biased historical data reproduces and amplifies existing inequities rather than fixing them

- Bias reduction requires clean training data, defined success criteria, human oversight, and continuous outcome monitoring

- Pair AI assessments with bias awareness training, diverse hiring panels, and skills-based job descriptions for best results

The Hidden Cost of Bias in Traditional Hiring

Human hiring decisions are distorted by predictable cognitive biases that operate largely outside conscious awareness. Affinity bias drives evaluators to favor candidates who resemble themselves culturally or demographically, reinforcing homogeneity. Confirmation bias leads interviewers to seek information validating their initial impressions rather than testing them objectively. Recency bias causes evaluators to over-weight the last candidates interviewed. Interview order distorts outcomes in other ways too: research shows evaluators are up to 40% less likely to favor a candidate after having favored the previous one, regardless of that candidate's merits.

These biases aren't deliberate. They're the predictable result of cognitive overload in high-volume hiring environments—and they carry a measurable financial cost.

Companies in the top quartile for gender and ethnic diversity on executive teams are 39% more likely to outperform financially compared to bottom-quartile peers. Homogeneous teams consistently underperform on innovation, problem-solving, and revenue metrics. Bias reduction, in other words, is a core business strategy.

Traditional hiring structures make bias nearly impossible to catch because they lack consistent evaluation frameworks:

- Unstructured interviews allow each interviewer to ask different questions and apply different standards

- Resume screening at scale forces recruiters to eliminate candidates in seconds based on superficial proxies

- Gut-feel decisions provide no documentation or accountability for why candidates were rejected

- Inconsistent rubrics mean every evaluator is applying subjective, variable criteria

When there's no shared standard, there's no way to know where bias entered the process—or how much it's costing you.

How AI Can Reduce Bias in Hiring—When Designed Right

AI's primary bias-reduction mechanism is standardization: every candidate is assessed against identical criteria, in the same format, with the same scoring model. This eliminates the evaluator-to-evaluator variability that allows unconscious bias to influence outcomes.

Skills-Based Assessment Over Credential Proxies

Thoughtfully designed AI shifts focus from proxies for talent—prestigious university names, employer brand recognition, employment gaps—to demonstrated competency. Instead of inferring ability from where someone went to school, AI evaluates what candidates can actually do through:

- Coding assessments that test real-world problem-solving across multiple programming languages

- Situational judgment tasks measuring decision-making in role-relevant scenarios

- Structured response scoring for communication and analytical skills, applied consistently across all candidates

Research demonstrates the impact: when organizations adopted skills-based, anonymized hiring processes, women accounted for 52% of successful senior hires—a 68% increase over the global average of 31%.

Blind Screening Eliminates Demographic Triggers

AI can be configured to redact names, photos, addresses, and graduation years before evaluation. This strips out the visual and contextual cues that trigger affinity and racial bias within seconds of a human viewing a resume—preventing snap judgments based on demographic signals rather than qualifications.
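As a rough illustration of the redaction step described above, the sketch below masks a fixed set of fields before a profile reaches a reviewer or a scoring model. The field names (`name`, `photo_url`, `address`, `graduation_year`) and the sample record are hypothetical; a real applicant-tracking export will use its own schema.

```python
# Hypothetical field names; adjust to whatever your applicant data actually uses.
REDACTED_FIELDS = {"name", "photo_url", "address", "graduation_year"}


def redact_profile(profile: dict) -> dict:
    """Return a copy of the profile with demographic-signal fields masked."""
    return {
        key: "[REDACTED]" if key in REDACTED_FIELDS else value
        for key, value in profile.items()
    }


# Illustrative record, not real candidate data.
candidate = {
    "name": "Jordan Example",
    "photo_url": "https://example.com/photo.jpg",
    "address": "123 Sample Street",
    "graduation_year": 2009,
    "skills": ["Python", "SQL"],
    "assessment_score": 87,
}

blind = redact_profile(candidate)
print(blind["name"])    # [REDACTED]
print(blind["skills"])  # ['Python', 'SQL']
```

Field-level masking like this only covers structured data. Free-text sections such as cover letters can still carry demographic cues, so they would need separate handling.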

Consistent Evaluation at Scale Without Fatigue

Human recruiters under time pressure resort to heuristics that encode bias. When processing hundreds of applications, decision fatigue inhibits reasoning ability and makes individuals more susceptible to cognitive shortcuts. AI applies the same rigorous criteria to every applicant in the pipeline without fatigue, inconsistency, or the cognitive overload that leads to poor judgment calls.

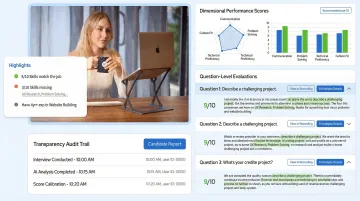

That consistency is where purpose-built platforms make a tangible difference. AltHire AI's interview agents conduct adaptive, structured interviews that assess skills, cultural fit, and potential using objective scoring. Recruiters define the evaluation criteria—technical proficiency, communication, problem-solving—before screening begins, and every candidate moves through the same 360° performance framework. Detailed reports deliver dimensional scores, question-by-question analysis, and complete transcripts, giving hiring teams documented evidence instead of gut reactions.

The Risk: When AI Mirrors and Amplifies Human Bias

AI learns from historical data, and if past hiring decisions were biased, the algorithm treats those discriminatory patterns as markers of "success." The most notorious example is Amazon's experimental AI recruiting tool, whose failure was reported in 2018. It penalized resumes containing the word "women's" because it was trained on a decade of resumes from a male-dominated applicant and hiring pool. The system taught itself that male candidates were preferable, disadvantaging qualified women.

The Aura of Neutrality Makes Bias Harder to Challenge

Because AI decisions appear data-driven and objective, they're harder to question than a manager's subjective judgment. A 2025 University of Washington study found that when AI made biased recommendations, human reviewers mirrored those biases up to 90% of the time in severe cases, even when the bias was visible. This "automation bias" means a flawed AI tool does more than produce biased recommendations: it also skews the judgment of the humans reviewing them.

How Bias Enters AI Systems

Bias infiltrates AI hiring tools at multiple points:

- Historical promotion data trains models to replicate past discrimination as a success signal

- Defining "high potential" using prior promotion history bakes existing inequities into the model

- Algorithms weight proxy features that correlate with protected characteristics (zip code or school attended, for example) even when those features have no causal link to job performance

- How results are surfaced to decision-makers can amplify or soften the underlying algorithmic bias

Documented Harm to Specific Groups

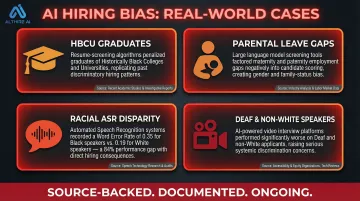

Research documents specific, measurable harm across candidate groups:

- HBCU graduates were penalized because algorithms learned that historically Black college candidates had lower "success rates"—a direct artifact of past discrimination, not candidate quality

- LLMs erroneously factored maternity and paternity gaps into job classification, disproportionately screening out mothers

- Commercial automatic speech recognition (ASR) systems showed substantial racial disparities, with average word error rates of 0.35 for Black speakers versus 0.19 for White speakers

- A 2025 ACLU complaint alleged video interview platforms performed worse for deaf and non-White speakers, raising discrimination concerns
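For context on the speech-recognition figures above: word error rate (WER) is conventionally the number of word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of reference words. A WER of 0.35 therefore means roughly 35 errors per 100 spoken words. The function below is a minimal sketch of that standard calculation, not the evaluation code used in the cited research, and the sample sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution, or match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


# One dropped word out of four reference words.
print(word_error_rate("the quick brown fox", "the quick fox"))  # 0.25
```

Computing this separately for each speaker group on comparable audio is what surfaces the kind of gap reported above.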

How to Choose and Implement AI Hiring Tools That Work

Interrogate Training Data Before Deployment

Ask vendors directly:

- What data was the model trained on?

- What outcome was it optimized to predict?

- Has it been audited for disparate impact across race, gender, age, and disability?

If a vendor cannot answer these questions clearly and specifically, that is itself a red flag. Any reputable vendor should be able to walk you through their training data sources and validation process without hesitation.

Define Success Criteria Before Screening Begins

Implement structured, criteria-first evaluation: spell out competencies, skill benchmarks, and culture indicators before any AI screening begins. This prevents the algorithm from reverse-engineering a profile based on who was hired historically, anchoring evaluation to future role needs rather than past hiring patterns.
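One way to make "criteria first" concrete is to write the rubric down as data before any candidate is scored, then apply it unchanged to everyone. The sketch below assumes three competencies, made-up weights, and a 1 to 5 rating scale; none of these come from a specific platform.

```python
# Hypothetical competencies and weights, fixed before screening begins.
RUBRIC = {
    "technical_proficiency": 0.5,
    "communication": 0.3,
    "problem_solving": 0.2,
}


def score_candidate(ratings: dict[str, float]) -> float:
    """Weighted score on the rating scale; refuses to score partial ratings."""
    missing = RUBRIC.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    # Only criteria named in the rubric count; extra keys are ignored.
    return sum(weight * ratings[name] for name, weight in RUBRIC.items())


total = score_candidate({
    "technical_proficiency": 4,
    "communication": 3,
    "problem_solving": 5,
})
print(round(total, 2))
```

Rejecting incomplete ratings is a deliberate choice here: it prevents a candidate from being ranked on a subset of the criteria, which is one way inconsistent standards creep back in.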

Mandate Continuous Monitoring, Not One-Time Setup

Regularly audit AI-generated shortlists to check whether candidates from different demographic groups are advancing at comparable rates. A one-time bias audit at launch is insufficient. Bias can drift as data shifts, candidate pools change, or model updates introduce new patterns. EEOC guidance emphasizes ongoing self-auditing rather than point-in-time compliance checks.
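A recurring audit can start with something as simple as comparing selection rates by group. The sketch below computes each group's shortlist rate and its ratio to the highest group's rate, then flags ratios below 0.8, the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures. The group labels and counts are invented for illustration, and a flag is a prompt for investigation, not a legal finding.

```python
from collections import Counter

FOUR_FIFTHS = 0.8  # rule-of-thumb threshold, not a legal determination


def selection_rates(outcomes):
    """outcomes: iterable of (group, was_shortlisted) pairs."""
    applied = Counter()
    shortlisted = Counter()
    for group, was_shortlisted in outcomes:
        applied[group] += 1
        if was_shortlisted:
            shortlisted[group] += 1
    return {group: shortlisted[group] / applied[group] for group in applied}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no candidates were shortlisted in any group")
    return {group: rate / top for group, rate in rates.items()}


# Invented audit data: 50 of 100 shortlisted in group_a, 24 of 80 in group_b.
outcomes = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 24 + [("group_b", False)] * 56
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = sorted(g for g, ratio in ratios.items() if ratio < FOUR_FIFTHS)

for group in sorted(ratios):
    print(group, round(rates[group], 2), round(ratios[group], 2))
print("needs review:", flagged)
```

Running the same check on every hiring cycle, rather than once at launch, is what turns it into monitoring. Small groups produce noisy ratios, so low counts deserve a closer look before drawing conclusions.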

Preserve Meaningful Human Oversight

AI should surface and rank candidates, but humans must make the final call with full awareness of the AI's limitations. Oversight isn't passive — it requires active familiarity with how the tool works and where it can fail.

A University of Washington study found that completing an Implicit Association Test before screening reduced AI-mirrored bias by 13%. That result suggests bias awareness training, when paired with AI interaction, can measurably reduce automation bias, though it does not eliminate it.

Look for Transparency and Explainability

Effective oversight depends on what your platform actually surfaces. Purpose-built tools should give reviewers enough information to understand and challenge AI decisions, not just accept them:

- Customizable scoring rubrics tied to defined competencies

- Blind-screening options that redact demographic signals before review

- Explainable outputs showing why each candidate was ranked a certain way

- Reporting that tracks selection rates across demographic groups for ongoing audits

AltHire AI is built around this standard: its AI-proctored interviews generate dimensional performance scores, question-level evaluations, and full video recordings, giving hiring teams the audit trail needed for both confident decisions and compliance accountability.

What HR Teams Can Do Alongside AI

Adopt Skills-Based Job Descriptions

Many bias problems begin before any AI touches the process. Vague or credential-heavy job descriptions filter out qualified candidates before they even apply.

Define role requirements in terms of demonstrable skills and outcomes rather than proxies like degree requirements or years of experience. Remove credential barriers that often screen out candidates from underrepresented groups without improving hire quality.

Train Interviewers on Implicit Bias and AI Limitations

The UW study demonstrated that bias awareness before a hiring task reduced the degree to which humans mirrored AI bias. Regular education on how AI systems can fail—and how human biases operate—reduces the risk that humans rubber-stamp flawed AI recommendations.

That said, training alone won't hold. Pair it with concrete guardrails:

- Structured evaluation rubrics applied consistently across candidates

- Documented scoring criteria reviewed before each hiring decision

- Ongoing monitoring of AI outputs for demographic disparities

Create Structured Interview Panels with Diverse Representation

When final decisions involve multiple evaluators from different backgrounds assessing against a shared rubric, the likelihood that any single evaluator's bias determines the outcome is significantly reduced. Structured interviews using standardized questions and anchored rating scales are twice as valid as unstructured interviews and substantially reduce bias.
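To illustrate why a panel dilutes any one evaluator's bias, the sketch below combines panel ratings for a single rubric criterion using the median, so one outlying score cannot set the result, and flags wide disagreement for discussion. The 1 to 5 scale, the three-rater minimum, and the `max_spread` threshold are assumptions for the example, not an established standard.

```python
from statistics import median


def panel_score(ratings: list[int], max_spread: int = 2):
    """Return (median rating, needs_discussion) for one rubric criterion."""
    if len(ratings) < 3:
        raise ValueError("use at least three evaluators per criterion")
    spread = max(ratings) - min(ratings)
    # A wide spread suggests evaluators read the rubric differently
    # or that one rating reflects something other than the criterion.
    return median(ratings), spread > max_spread


print(panel_score([4, 4, 1]))  # (4, True)
print(panel_score([3, 4, 4]))  # (4, False)
```

In the first case a simple average would drop to 3.0 because of the single low rating; the median stays at 4 while the flag sends the disagreement back to the panel.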

Frequently Asked Questions

Does AI reduce bias in hiring?

AI can reduce bias when specifically designed to standardize evaluation criteria, apply objective scoring, and remove demographic signals. Poorly designed AI trained on biased historical data, however, can entrench or amplify existing inequities — the outcome depends entirely on system design, data quality, and human oversight.

How can you reduce bias in AI hiring?

Audit the training data and model outputs for disparate impact, define success criteria based on skills before screening begins, and maintain continuous human oversight with regular fairness monitoring. Treat AI as a tool requiring active management, not a set-and-forget solution.

What actions can HR take to minimize bias in recruitment and hiring practices?

Practical steps include:

- Rewrite job descriptions around competencies rather than credentials

- Use structured interview rubrics applied consistently across all candidates

- Pair implicit bias training with systemic safeguards

- Build diverse hiring panels to distribute decision-making across multiple perspectives

What types of bias affect the traditional hiring process?

Affinity bias (favoring culturally similar candidates), confirmation bias (over-weighting early impressions), recency bias (favoring recently interviewed candidates), and attribution bias (judging identical behaviors differently depending on a candidate's background) are the most common forms distorting human hiring decisions.

Can AI hiring tools be held legally accountable for discriminatory outcomes?

Accountability rests with the employer: employers remain legally liable for discriminatory outcomes even when an AI system made the recommendation. Specific rules also apply to the tools themselves. New York City's Local Law 144 requires bias audits of automated employment decision tools, and the EU AI Act classifies AI used in hiring as "high-risk." Vendor transparency and internal auditing are not optional; they are a legal safeguard.

What is structured interviewing and why does it reduce bias?

Structured interviewing means asking every candidate the same predetermined questions and scoring responses against the same criteria. It eliminates the improvised, relationship-driven dynamics where bias most easily enters — replacing gut-feel evaluation with consistent, rubric-based scoring.