Introduction

Resumes with White-sounding names receive 50% more callbacks for interviews than identical resumes with African-American-sounding names—a statistic from Bertrand and Mullainathan's landmark 2004 study that remains as relevant today as it was two decades ago. This bias surfaces before a single conversation takes place, filtering out qualified candidates based solely on perceived identity markers like name, address, or graduation year.

The business consequences extend well beyond missed talent. Biased hiring drives turnover costs estimated at 90-200% of an employee's annual salary, shrinks team diversity, and creates real legal exposure—the EEOC recovered nearly $700 million for discrimination victims in FY2024 alone.

Early-stage screening disparities compound over time, producing homogeneous teams and self-reinforcing referral networks that make the problem harder to fix with each hiring cycle.

Hiring bias is rarely intentional. It operates through cognitive shortcuts below conscious awareness, which is exactly what makes it so persistent. Reducing it takes deliberate process changes at specific decision points—not just good intentions.

TL;DR

- Bias enters through job descriptions, resume screening, and unstructured interviews, not just overt discrimination

- Blind hiring and standardized interviews are among the most evidence-backed interventions

- Bias training raises awareness but must be paired with structural process changes

- Structured AI interviews enforce consistent questioning and scoring across every candidate

- Tracking pipeline diversity metrics turns bias reduction into an ongoing practice

Why Hiring Bias Costs More Than One Bad Hire

The financial damage from biased hiring is substantial and measurable. SHRM estimates that employee turnover costs organizations 90-200% of an employee's annual salary when accounting for direct replacement expenses and indirect productivity losses. For a mid-level employee earning $60,000, that translates to $54,000–$120,000 per departure.
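The arithmetic behind that range can be sketched in a few lines. The function name and default percentages are illustrative assumptions based on the 90-200% estimate quoted above, not an official SHRM formula.

```python
def turnover_cost_range(annual_salary, low_pct=90, high_pct=200):
    """Return the (low, high) estimated cost of replacing one employee."""
    if annual_salary < 0:
        raise ValueError("annual_salary must be non-negative")
    # Percentages are applied to salary; defaults mirror the 90-200% estimate.
    return annual_salary * low_pct / 100, annual_salary * high_pct / 100


low, high = turnover_cost_range(60_000)
print(f"${low:,.0f} to ${high:,.0f} per departure")
```

Multiplying the result by expected annual departures gives a rough budget figure to weigh against the cost of process changes.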

Legal exposure adds another layer of risk. The EEOC recovered almost $700 million for discrimination victims in FY2024, with new charges reaching 88,531 cases. These figures represent only formal complaints — the true cost includes settlements, legal fees, and reputational damage that rarely surfaces in public records.

Those financial and legal costs don't stay isolated — they compound. Biased early hiring shapes team composition, which determines who gets referred, promoted, and retained.

Early sorting at the callback stage creates a self-reinforcing cycle where existing demographics shape future hires. Over time, this becomes an organizational problem that's progressively harder to correct the longer it goes unaddressed.

Where Bias Enters the Hiring Funnel

Bias isn't a single event—it's a series of decision points where cognitive shortcuts influence judgment. Understanding where these vulnerabilities exist helps organizations design targeted interventions.

Common bias types and their typical entry points:

- Affinity bias: Surfaces during unstructured interviews and informal conversations, where shared backgrounds drive warmer evaluations

- Halo/horn effect: Appears in resume screening when a prestigious school or brand-name employer overshadows actual qualifications

- Confirmation bias: Dominates interview stages — interviewers often form a judgment within five minutes, then spend the rest of the conversation looking for evidence to confirm it

- Attribution bias: Shapes how interviewers read confidence, assertiveness, or communication style differently based on a candidate's identity

Interviewer-applicant similarity consistently produces more favorable evaluations, even when qualifications are identical. Masculine-coded language like "dominant" or "competitive" in job descriptions also reduces appeal to women — not because of perceived skill gaps, but because candidates anticipate not belonging.

These aren't character flaws — they're cognitive shortcuts that operate below conscious awareness. That's why awareness training alone rarely moves the needle. The seven strategies below focus on structural fixes: removing the conditions that let bias take hold in the first place.

7 Practical Ways to Reduce Bias in Your Hiring Process

Each strategy targets a specific stage of the hiring funnel where bias is most likely to surface. The most effective approach layers multiple strategies rather than relying on any single fix.

Rewrite Job Descriptions with Inclusive Language

Biased job descriptions narrow the applicant pool before a single resume is reviewed. Gaucher, Friesen, and Kay's 2011 research found that masculine-coded language reduces job appeal to women by lowering their sense of anticipated belongingness—women infer lower gender diversity and feel they wouldn't fit in.

Practical steps:

- Replace gendered words (dominant, nurturing, aggressive, supportive) with neutral alternatives

- Split qualifications into required vs. preferred to avoid credential inflation

- Remove unnecessary degree requirements that filter out skilled workers—analysis from the Burning Glass Institute found that non-degreed candidates hired into roles that dropped degree requirements had 10 percentage points higher two-year retention than college-educated colleagues

- Use free tools that flag coded language automatically

The result: a broader, more qualified applicant pool at zero cost.
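As a rough illustration of what a language-flagging tool does, here is a minimal Python sketch. The word list is a small illustrative sample drawn from the words mentioned in this article, not a validated lexicon, and the function name is hypothetical.

```python
import re

# Illustrative sample only: words this article mentions, not a validated lexicon.
CODED_WORDS = {
    "dominant": "masculine-coded",
    "aggressive": "masculine-coded",
    "competitive": "masculine-coded",
    "nurturing": "feminine-coded",
    "supportive": "feminine-coded",
}


def flag_coded_language(text):
    """Return (word, label, position) for each coded word, in order of appearance."""
    findings = []
    for match in re.finditer(r"[A-Za-z]+", text):
        word = match.group().lower()
        if word in CODED_WORDS:
            findings.append((word, CODED_WORDS[word], match.start()))
    return findings


posting = "We want a dominant, competitive closer who is supportive of teammates."
for word, label, position in flag_coded_language(posting):
    print(f"{word!r} at character {position}: {label}")
```

An exact-match list like this misses word variants such as "dominate", so treat it as a first pass rather than a replacement for a maintained tool.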

Implement Blind Resume Screening

Blind hiring removes personally identifiable information—name, address, photo, graduation year, affiliated organizations—from resumes before review. This forces evaluators to assess qualifications on merit rather than unconscious associations.

The 50% callback gap for White-sounding names is exactly the kind of identity signal blind screening removes. Evidence that concealment works comes from orchestra blind auditions (Goldin & Rouse, 2000), which significantly increased the probability of female musicians being hired when identity was concealed.

Implementation options:

- Manual: Designate a team member to redact identifying information before distribution

- Automated: Use purpose-built software that strips metadata and reformats resumes
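For teams scripting the redaction step themselves, a minimal sketch might look like the following. It assumes resumes have already been parsed into a dictionary; the field names and the `redact_resume` helper are hypothetical and would need to match your own data.

```python
# Fields this article lists as identifying; adjust to match your own resume schema.
IDENTIFYING_FIELDS = {
    "name",
    "address",
    "photo",
    "graduation_year",
    "affiliated_organizations",
}


def redact_resume(resume, candidate_id):
    """Return a copy of the resume without identifying fields, keyed by an opaque ID."""
    blind = {key: value for key, value in resume.items() if key not in IDENTIFYING_FIELDS}
    blind["candidate_id"] = candidate_id
    return blind


resume = {
    "name": "Jordan Example",
    "address": "123 Sample Street",
    "graduation_year": 2009,
    "skills": ["SQL", "Python"],
    "experience": ["Data analyst, 4 years"],
}
print(redact_resume(resume, candidate_id="C-0042"))
```

Dropping fields does not catch identity clues inside free text, such as a name repeated in a cover letter, so a manual spot check is still worthwhile.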

Critical limitation: Bias can re-enter once identity is revealed. Harvard Business Review (2017) found that while blind screening helped more minority candidates get interviews, they weren't ultimately hired at higher rates—bias simply shifted to the interview stage. This makes combining blind screening with structured interviews essential.

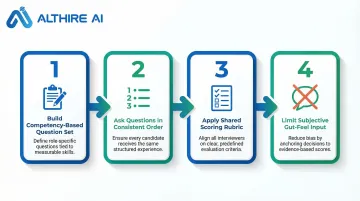

Standardize Interview Questions Across All Candidates

Unstructured interviews are one of the highest-risk stages for bias. When interviewers ask different questions to different candidates, fair comparison becomes impossible. Schmidt and Oh's 2016 meta-analysis found structured interviews have significantly higher validity (.58) for predicting job performance compared to unstructured interviews (.46).

How to standardize:

- Build a consistent question set tied directly to job-relevant competencies

- Ask questions in the same order for all candidates

- Use a shared scorecard so all interviewers evaluate on the same rubric

- Limit opportunities for subjective "gut feelings" to influence outcomes

Job-relevant criteria, consistent sequencing, and shared rubrics collectively limit affinity bias, halo effects, and other cognitive shortcuts that contaminate unstructured evaluations.
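A shared scorecard can be as simple as a weighted rubric applied identically to every candidate. The sketch below is one possible shape, with a hypothetical three-competency rubric and a 1-5 rating scale chosen purely for illustration.

```python
from statistics import mean

# Hypothetical rubric: competency -> weight (weights sum to 1.0).
RUBRIC = {"problem_solving": 0.4, "communication": 0.3, "role_knowledge": 0.3}


def weighted_score(ratings):
    """Combine one interviewer's 1-5 ratings into a single weighted score."""
    missing = set(RUBRIC) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for competency in RUBRIC:
        if not 1 <= ratings[competency] <= 5:
            raise ValueError(f"{competency} must be rated from 1 to 5")
    return sum(weight * ratings[competency] for competency, weight in RUBRIC.items())


def panel_score(panel_ratings):
    """Average the weighted scores from every interviewer on the panel."""
    return mean(weighted_score(ratings) for ratings in panel_ratings)


panel = [
    {"problem_solving": 4, "communication": 3, "role_knowledge": 5},
    {"problem_solving": 3, "communication": 4, "role_knowledge": 4},
]
print(round(panel_score(panel), 2))
```

Rejecting incomplete scorecards is a deliberate choice here: it forces every interviewer to rate every competency instead of scoring only what impressed them.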

Build a Diverse Interview Panel

A single interviewer's perspective creates a single point of bias. A panel drawn from different departments, seniority levels, and backgrounds introduces counterbalancing viewpoints and reduces the influence of any one person's preferences.

Research by Dobbin and Kalev shows that diverse panels reduce individual "like-me" bias and mitigate cultural matching by creating social accountability among evaluators. When multiple perspectives are required to reach consensus, extreme or biased positions are moderated through group discussion.

Worth noting for recruiting outcomes: A diverse panel also signals inclusivity to candidates, which can improve employer brand perception and offer acceptance rates among top talent.

Train Hiring Teams on Unconscious Bias

Training is necessary but not sufficient on its own. It reliably increases short-term awareness of bias and concern about its effects immediately following intervention. However, Forscher et al.'s 2019 meta-analysis found a small correlation (r ≈ .09) between changes in implicit measures and changes in actual behavior, indicating a significant gap between changing attitudes and changing actions.

Effective training characteristics:

- Recurring sessions rather than one-time modules

- Scenario-based exercises reflecting real hiring situations

- Integration with structural changes like diversity task forces and standardized evaluation processes

- Managerial oversight and accountability mechanisms

Training raises awareness and helps interviewers recognize patterns like affinity bias or the halo effect, but must be paired with structural guardrails that prevent bias from influencing decisions even when awareness lapses.

Use AI-Powered Tools to Reduce Evaluator Subjectivity

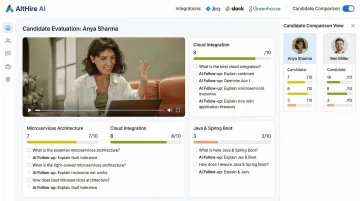

AI-driven interview platforms remove inconsistency at scale. By asking all candidates the same adaptive questions, scoring responses against predefined competency rubrics, and generating objective reports, these tools reduce the evaluator subjectivity that leads to bias.

A 2026 validation study published in JMIR Medical Education found that AI-based assessments demonstrated substantially higher inter-rater reliability (ICC of 0.77-0.82) compared to just 0.38 for human raters, with internal consistency also higher and scoring variation roughly half that of human evaluators.

How it works in practice:

AltHire AI conducts structured, AI-powered interviews with consistent scoring across all candidates. The platform asks role-specific questions based on job descriptions, adapts follow-up questions to each response while maintaining evaluation consistency, and generates detailed performance reports that reduce the variability bias exploits.

- Integrates with 20+ ATS platforms including Greenhouse, Lever, and Workable

- Candidate data and scores flow automatically — no manual re-entry that could reintroduce bias

Critical consideration: AI systems must be trained on diverse, representative data to avoid encoding existing biases. The EU AI Act classifies AI used for recruitment as "high-risk," triggering rigorous testing requirements, human oversight mandates, and transparency obligations. The EEOC has affirmed that existing anti-discrimination laws apply to AI, meaning employers remain liable for discriminatory outcomes.

Set Diversity Goals and Track Pipeline Metrics

Without measurement, bias goes undetected at every stage. Dobbin and Kalev's research found that companies establishing accountability structures like diversity task forces saw 9-30% increases in representation of white women and each minority group in management over five years.

What to track:

- Applicant demographics at each funnel stage: applicants → screened → interviewed → offered → hired

- Offer acceptance rates by demographic group

- Source-of-hire diversity (which channels produce diverse candidates)

- Time-to-hire by demographic group
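To see where candidates drop out, stage-to-stage pass rates can be computed per group. The sketch below assumes you already have counts per stage; the stage keys, group labels, and numbers are made up for illustration.

```python
STAGES = ["applied", "screened", "interviewed", "offered", "hired"]


def stage_pass_rates(counts):
    """For each group, return the share of candidates advancing from each stage to the next."""
    rates = {}
    for group, by_stage in counts.items():
        rates[group] = {}
        for current, following in zip(STAGES, STAGES[1:]):
            entered = by_stage[current]
            # None marks a stage nobody reached, so it is not mistaken for a 0% pass rate.
            rates[group][f"{current}->{following}"] = (
                by_stage[following] / entered if entered else None
            )
    return rates


# Made-up counts for two placeholder groups.
counts = {
    "group_a": {"applied": 200, "screened": 100, "interviewed": 40, "offered": 10, "hired": 8},
    "group_b": {"applied": 200, "screened": 60, "interviewed": 30, "offered": 6, "hired": 5},
}
for group, rates in stage_pass_rates(counts).items():
    print(group, {step: round(rate, 2) for step, rate in rates.items() if rate is not None})
```

Comparing the same transition across groups points to the stage that needs attention, which a single end-to-end hire rate would hide.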

Analysis approach:

Use the four-fifths (80%) rule as a guideline: if the selection rate for any group is less than 80% of the rate for the group with the highest selection rate, adverse impact may exist. Run this analysis at least annually, and use a two-standard-deviation test to check whether a gap is statistically significant.
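Both checks are straightforward to compute. The sketch below uses made-up counts, and the two-standard-deviation check is written as a pooled two-proportion z statistic, which is one common formulation and an assumption here rather than the only accepted method.

```python
from math import sqrt


def impact_ratios(groups):
    """groups maps name -> (selected, applicants); returns each rate divided by the highest rate."""
    rates = {name: selected / applicants for name, (selected, applicants) in groups.items()}
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections, so ratios are undefined")
    return {name: rate / highest for name, rate in rates.items()}


def two_sd_statistic(selected_a, n_a, selected_b, n_b):
    """Difference in selection rates, expressed in pooled standard deviations."""
    pooled = (selected_a + selected_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("everyone or no one was selected, so the statistic is undefined")
    return (selected_a / n_a - selected_b / n_b) / std_error


groups = {"group_a": (48, 120), "group_b": (24, 100)}  # made-up counts
for name, ratio in impact_ratios(groups).items():
    status = "possible adverse impact" if ratio < 0.8 else "within guideline"
    print(f"{name}: impact ratio {ratio:.2f} ({status})")

z = two_sd_statistic(48, 120, 24, 100)
print(f"difference is {abs(z):.2f} standard deviations")
```

A ratio below 0.8 together with a difference above two standard deviations is a signal to investigate the stage, not proof of discrimination. Small samples can trip either test by chance.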

Tracking this data surfaces exactly where diverse candidates drop out and gives leadership a concrete basis for corrective action. Only 26% of organizations currently analyze hiring outcomes by race/ethnicity (Paradigm, 2023) — making it a genuine competitive advantage for those who do.

How to Measure and Sustain Bias-Free Hiring Over Time

Bias reduction is not a project with an end date—it requires regular auditing of hiring outcomes and a feedback loop that translates findings into process adjustments.

Establish a review cadence:

- Monthly or quarterly analysis of recruitment data

- Examination of offer rates by demographic group

- Review of interviewer scoring patterns to identify inconsistencies

- Analysis of source-of-hire diversity to optimize recruiting channels
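One simple way to review interviewer scoring patterns is to compare each interviewer's average score with the overall average. The sketch below is an assumption about how such a check could work; the threshold of 0.75 points and the sample scores are arbitrary.

```python
from collections import defaultdict
from statistics import mean


def interviewer_deviations(scores, threshold=0.75):
    """scores is a list of (interviewer, score) pairs.

    Returns interviewers whose mean score differs from the overall mean
    by more than threshold, mapped to the size of that gap.
    """
    by_interviewer = defaultdict(list)
    for interviewer, score in scores:
        by_interviewer[interviewer].append(score)
    overall = mean(score for _, score in scores)
    flagged = {}
    for interviewer, values in by_interviewer.items():
        gap = mean(values) - overall
        if abs(gap) > threshold:
            flagged[interviewer] = round(gap, 2)
    return flagged


# Made-up scores on a 1-5 scale.
scores = [
    ("A", 4), ("A", 3), ("A", 4),
    ("B", 3), ("B", 4), ("B", 4),
    ("C", 4), ("C", 4), ("C", 3),
    ("D", 1), ("D", 2), ("D", 2),
]
print(interviewer_deviations(scores))
```

A flagged interviewer may simply have seen a weaker slate of candidates, so the output is a prompt for a conversation rather than a verdict.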

Candidate feedback matters too. Candidates who went through your process can point out friction that internal reviewers miss. Exit surveys and candidate experience data surface those gaps directly.

Building accountability into the process is what separates sustained progress from one-time audits. Concrete steps include:

- Assigning specific owners to diversity outcome metrics (not just HR)

- Publishing hiring funnel data internally on a set schedule

- Requiring managers to respond to demographic disparities in their pipelines

- Tying diversity metrics to team-level performance reviews

When accountability has a name attached and numbers that update regularly, hiring teams treat it as operational — not aspirational.

Conclusion

Bias doesn't require bad intent to do damage. It enters where structure is absent, operating through cognitive shortcuts that run below conscious awareness regardless of values or training. The solution is process design, not attitude adjustment.

The seven strategies work best as a system, with each one closing a different gap:

- Rewriting job descriptions expands your applicant pool by removing exclusionary language

- Blind screening ensures initial review is based on merit, not identity signals

- Standardized interviews create consistent, comparable evaluations across candidates

- Diverse panels introduce counterbalancing perspectives that offset individual blind spots

- Bias training raises awareness of the shortcuts that distort judgment

- AI tools enforce consistency at scale across every interaction

- Tracking metrics surfaces patterns, drives accountability, and shows where gaps remain

Together, these practices shift hiring from a process shaped by familiarity to one shaped by fit. That shift produces better hires, more diverse teams, and a defensible process — without slowing you down.

Frequently Asked Questions

How can you reduce bias in the hiring process?

Reduce bias through structural changes: rewrite job descriptions to remove gendered language, implement blind resume screening, use standardized interview questions with shared scorecards, build diverse interview panels, and track pipeline metrics at every funnel stage. Training raises awareness but must be paired with these process-level interventions.

What are the 5 C's of interviewing?

The 5 C's framework evaluates Competency, Character, Communication, Culture fit, and Coachability. Structured interviews with job-relevant questions and objective scoring rubrics help assess each dimension consistently across all candidates.

What is unconscious bias in hiring?

Unconscious bias refers to automatic, unintentional preferences that shape hiring decisions—such as affinity bias (favoring candidates similar to yourself) or the halo effect (letting one positive trait color an entire evaluation). Because these biases operate below conscious awareness, process-level interventions matter far more than individual vigilance alone.

Does blind hiring actually work?

Blind hiring targets a well-documented gap: Bertrand and Mullainathan's audit study found identical resumes with White-sounding names received 50% more callbacks than those with African-American-sounding names, and concealing identity in orchestra auditions (Goldin & Rouse, 2000) significantly increased the probability of female musicians being hired. Blind screening delivers the strongest results when paired with structured interviews. Otherwise, bias tends to re-enter at the face-to-face stage.

How do structured interviews reduce bias?

Structured interviews use identical, job-relevant questions for all candidates and a shared scoring rubric. This approach reduces the influence of irrelevant factors — appearance, accent, personal rapport — on hiring decisions. Schmidt and Oh's meta-analysis put the difference in concrete terms: structured interviews predict job performance with a validity of .58 versus .46 for unstructured conversations.

What role does AI play in reducing hiring bias?

AI-powered interview tools enforce consistent questioning and scoring across every candidate, reducing the variability that lets bias accumulate over time. That said, AI systems trained on non-representative data can encode the same biases they're meant to prevent, which is why the EU AI Act classifies recruitment AI as "high-risk," requiring rigorous testing and human oversight before deployment.