Introduction

The interview is the most critical stage in any hiring process—yet most hiring teams treat it as a conversation rather than a structured assessment. That gap leads to inconsistent, bias-prone decisions.

Research shows that structured interviews are significantly more predictive of job performance than unstructured ones, with validity coefficients (a measure of predictive accuracy) of .51 versus .38 respectively. Despite this evidence, many organizations still rely on improvised questions and subjective impressions.

That improvisation has real costs. Structuring interview questions seems straightforward, but results vary widely based on question type, sequence, competency mapping, and how responses are evaluated. Without a systematic approach, even well-intentioned interviewers introduce bias, ask legally problematic questions, or fail to gather comparable data across candidates.

This guide walks through exactly how to build a structured interview process that produces consistent, defensible hiring decisions.

TL;DR

- Structured interviews use pre-planned, job-relevant questions asked consistently across all candidates to reduce bias and improve hiring accuracy

- Start with a job analysis to identify key competencies, then map specific question types to each one

- Six question types cover every stage: warm-up, verification, behavioral, situational, skills-based, and culture alignment

- Scoring rubrics are essential — without them, well-structured questions still lead to subjective decisions

- Top pitfalls: skipping job analysis, varying questions per candidate, and relying on untrained interviewers

What Is a Structured Interview and Why Does Question Structure Matter?

A structured interview is a pre-designed process in which all candidates are asked the same job-relevant questions in the same order and evaluated against the same scoring criteria. This contrasts sharply with unstructured interviews, where interviewers ask whatever comes to mind based on gut feel or conversation flow. The difference between these two approaches shows up directly in hiring outcomes.

The Predictive Validity Gap

Meta-analytic research demonstrates that structured interviews achieve a validity coefficient of .51, while unstructured interviews reach only .38 — a meaningful gap when you're trying to predict who will actually perform on the job.

The advantage compounds with better scoring tools. When combined with Behaviorally Anchored Rating Scales (BARS), criterion-related validity increases to .35 compared to .26 without scales.

Legal Defensibility and Compliance

Question structure has direct legal consequences, not just fairness implications. The Uniform Guidelines on Employee Selection Procedures (UGESP) establish that any selection procedure with adverse impact must be validated through formal job analysis. Asking inconsistent or inappropriate questions exposes organizations to compliance risks.

Prohibited question categories include:

- Age, marital status, or family planning

- Religion or national origin

- Disability-related questions or medical examinations pre-offer

- Race, ethnicity, or language spoken at home

Structured interviews built on job analysis ensure every question has a clear, justifiable purpose tied to actual role requirements, which reduces your organization's exposure to disparate treatment claims.

How to Structure Interview Questions for Better Hiring

Step 1: Conduct a Job Analysis to Identify Key Competencies

Review the job description and identify the critical knowledge, skills, abilities, and behaviors (KSAs) required for success in the role. Common competencies include:

- Problem-solving and analytical thinking

- Communication and interpersonal skills

- Teamwork and collaboration

- Technical or role-specific skills

- Self-management and adaptability

Questions built without a job analysis are likely to be generic and legally indefensible. The U.S. Office of Personnel Management (OPM) recommends assessing 4 to 6 competencies per structured interview to ensure sufficient depth while maintaining focus.

Step 2: Map One or More Questions to Each Competency

For each identified competency, plan at least one question that directly assesses it. Avoid doubling up on the same competency while leaving others unassessed.

Prioritization approach:

- Determine which competencies are "must-have" versus "nice-to-have"

- Assign more questions to critical competencies

- Keep total question count realistic for a 45-minute interview (typically 5–8 behavioral questions plus opening and closing)
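
The mapping described above can be checked mechanically before the interview guide is finalized. The following is a minimal sketch, not a prescribed tool: the competency names, the must-have flags, and the question plan are all hypothetical placeholders. It reports competencies with no question and must-have competencies covered by only one question.

```python
from collections import Counter

# Hypothetical competencies from a job analysis; True marks a "must-have".
competencies = {
    "problem_solving": True,
    "communication": True,
    "teamwork": False,
    "adaptability": False,
}

# Hypothetical question plan: (question text, competency it assesses).
question_plan = [
    ("Tell me about the most challenging problem you solved last year.", "problem_solving"),
    ("Describe the last time you had to fix an issue under time pressure.", "problem_solving"),
    ("Tell me about a time you explained a complex decision to a non-expert.", "communication"),
    ("Describe the last time you disagreed with a teammate.", "teamwork"),
]


def coverage_report(competencies, question_plan):
    """Return (unassessed competencies, must-haves with only one question)."""
    counts = Counter(competency for _, competency in question_plan)
    unassessed = [c for c in competencies if counts[c] == 0]
    thin_must_haves = [
        c for c, must_have in competencies.items() if must_have and counts[c] == 1
    ]
    return unassessed, thin_must_haves


unassessed, thin_must_haves = coverage_report(competencies, question_plan)
print("Unassessed:", unassessed)
print("Must-haves with a single question:", thin_must_haves)
```

With this sample plan, the report flags "adaptability" as unassessed and "communication" as a must-have that may deserve a second question.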

Platforms like AltHire AI enable teams to build standardized question sets mapped to specific competencies, with the AI automatically generating role-specific questions based on job descriptions and evaluation criteria.

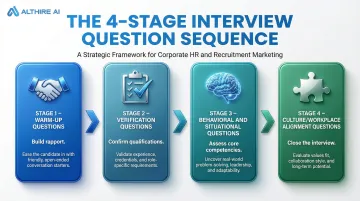

Step 3: Sequence Questions Intentionally

The recommended flow progresses from lower-stakes to higher-stakes questions:

- Warm-up questions to build rapport

- Verification questions to confirm qualifications

- Behavioral and situational questions to assess core competencies

- Culture/workplace alignment questions to close

This sequencing helps candidates settle into the interview and produce more honest, detailed responses. Jumping immediately into high-pressure questions can trigger anxiety that obscures a candidate's true capabilities.
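
If questions are drafted in no particular order, the flow above can be applied with a simple sort. This is an illustrative sketch only: the type labels and the numeric stage order are assumptions made for the example, and skills-based questions are left out because the flow above does not place them.

```python
# Stage order taken from the recommended flow; lower number = asked earlier.
# Behavioral and situational questions share a stage.
STAGE_ORDER = {
    "warm_up": 0,
    "verification": 1,
    "behavioral": 2,
    "situational": 2,
    "culture_alignment": 3,
}

# Questions in the order they happened to be drafted.
questions = [
    {"type": "culture_alignment", "text": "What type of environment brings out your best work?"},
    {"type": "behavioral", "text": "Tell me about a time you had to manage a difficult stakeholder relationship."},
    {"type": "warm_up", "text": "Can you briefly walk me through your background and your current role?"},
    {"type": "situational", "text": "How would you handle a situation where your team missed a key deadline?"},
    {"type": "verification", "text": "Have you managed a distributed team before?"},
]


def sequence(questions):
    """Order questions by stage; sorted() is stable, so ties keep drafted order."""
    return sorted(questions, key=lambda q: STAGE_ORDER[q["type"]])


ordered = sequence(questions)
for position, question in enumerate(ordered, start=1):
    print(position, question["type"], "-", question["text"])
```

An unknown question type raises a KeyError here, which is deliberate: every question should be classified before the guide is used.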

Step 4: Apply the STAR Format to Frame Behavioral and Situational Questions

The STAR structure (Situation, Task, Action, Result) is the standard framework for writing behavioral questions. Questions should prompt candidates to describe a real past experience with enough specificity to evaluate their actual actions and outcomes.

Writing STAR-compliant questions:

- Use superlative or recency prompts: "Tell me about the most challenging..." or "Describe the last time you had to..."

- Prepare follow-up probes: "What specifically did you do?" / "What was the outcome?"

- Standardize the follow-up probes (which ones are allowed and how many per question) so all candidates receive an equal opportunity to elaborate

Example: "Tell me about a time you had to manage a difficult stakeholder relationship—what was the situation, what did you do, and what was the result?"

Step 5: Build Scoring Rubrics Before the Interview

A scoring rubric is a rating scale (typically 1–5) anchored with behavioral descriptions of what low, average, and outstanding responses look like for each question. This converts subjective impressions into comparable, defensible data.

Critical requirements:

- Develop rubrics alongside questions, not after

- Include specific behavioral examples at each rating level

- Convene subject matter experts to reach consensus on anchor definitions

A meta-analysis by Levashina et al. (2014) found that BARS increase inter-rater reliability to .77 (up from .73 without scales) and significantly reduce demographic and disability bias by forcing interviewers to focus on objective, predefined behavioral standards.

Types of Structured Interview Questions You Should Know

Structured interviews draw from several question types, each targeting a different aspect of how a candidate thinks, works, and fits the role. Using the right mix gives you a fuller, more reliable picture than any single approach can provide.

Warm-Up Questions

These open the interview, ease initial anxiety, and establish rapport before the substantive questions begin. A simple prompt like "Can you briefly walk me through your background and your current role?" gives candidates a low-stakes way to settle in — and helps them perform at their best when it matters.

Verification Questions

Used in early screening stages, these confirm whether a candidate meets baseline qualifications. They're typically yes/no or short-answer: "Have you managed a distributed team before?" or "Do you have experience with Python and machine learning frameworks?" Quick to ask, quick to score, and effective at filtering before deeper evaluation begins.

Behavioral Questions

Structured using the STAR format (Situation, Task, Action, Result), behavioral questions assess how a candidate has acted in the past as a predictor of future performance. Example: "Tell me about a time you had to manage a difficult stakeholder relationship — what did you do and what was the result?"

Meta-analytic research shows past behavior questions paired with structured scoring criteria achieve a mean validity of .63 — one of the strongest predictors in interviewing research.

Situational Questions

These present a hypothetical scenario relevant to the role, evaluating judgment and problem-solving before a candidate has experienced the situation. Example: "How would you handle a situation where your team missed a key deadline due to conflicting priorities?"

Ask candidates to walk through their reasoning step by step — it reveals how they think, not just what they'd do.

Skills-Based / Technical Questions

These verify role-specific technical competency, sometimes through a task or work sample rather than a verbal question alone. For a writing role, that might mean a short assignment mirroring actual job tasks. For a developer, it could be solving a real coding problem during the interview. Work samples consistently outperform self-reported skill assessments for accuracy.

Culture and Workplace Alignment Questions

These assess whether a candidate's working style, values, and preferences align with the team. A strong example: "What type of environment brings out your best work, and how does that compare to what you know about how we operate here?"

Use with caution. SHRM research shows that vague "culture fit" assessments trigger affinity bias and unconscious discrimination — interviewers evaluating minority candidates frequently shift from evaluating job fit to culture fit, undermining validity.

Reframe these as behaviorally anchored "values alignment" questions tied to specific, job-relevant competencies. That keeps the intent intact while keeping the process defensible.

Key Variables That Affect Structured Interview Outcomes

Even well-written questions produce inconsistent results when key execution variables are not controlled. The four variables below determine whether your structured interview process actually delivers comparable, defensible candidate data.

Question Clarity and Job-Relevance

Questions must be free of jargon, at an appropriate comprehension level, and clearly tied to real job requirements. Vague questions produce vague answers that are difficult to score.

Best practice: Have a peer or job incumbent review questions before finalizing them to ensure clarity and relevance.

Interviewer Consistency

All candidates for the same role must be asked the same questions in the same order. Deviation introduces bias and makes comparative evaluation unreliable.

Implementation requirements:

- Brief interviewers in advance

- Provide the question guide, probes, and rating scales

- Enforce adherence to the standardized protocol
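
Adherence to the protocol can be audited after the fact if interviewers record which questions they asked. The sketch below assumes a hypothetical log of question IDs per candidate (the IDs, candidate names, and log format are invented for illustration) and reports skipped, added, or reordered questions.

```python
# Hypothetical question IDs in the order the guide requires.
GUIDE = ["Q1", "Q2", "Q3", "Q4"]

# Hypothetical record of what each interviewer actually asked.
asked_log = {
    "candidate_1": ["Q1", "Q2", "Q3", "Q4"],
    "candidate_2": ["Q1", "Q3", "Q2", "Q4"],  # same questions, different order
    "candidate_3": ["Q1", "Q2", "Q4"],        # Q3 skipped
}


def deviations(guide, asked_log):
    """Return, per candidate, any departure from the standardized guide."""
    issues = {}
    for candidate, asked in asked_log.items():
        if asked == guide:
            continue  # followed the protocol exactly
        shared_as_asked = [q for q in asked if q in guide]
        shared_in_guide_order = [q for q in guide if q in asked]
        issues[candidate] = {
            "missing": [q for q in guide if q not in asked],
            "extra": [q for q in asked if q not in guide],
            "order_changed": shared_as_asked != shared_in_guide_order,
        }
    return issues


issues = deviations(GUIDE, asked_log)
for candidate, detail in issues.items():
    print(candidate, detail)
```

Candidates that appear in the output were not interviewed on identical terms, so their scores should be compared with extra care.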

Scoring Rubric Quality

The granularity of the rubric determines how objectively responses can be compared. Generic rubrics (e.g., "good answer / bad answer") produce the same subjectivity as no rubric.

Well-anchored rubrics with behavioral examples at each rating level produce measurably higher inter-rater reliability. A rubric for "problem-solving," for instance, might define:

- 1 (Poor): Describes problem vaguely; no clear action taken

- 3 (Average): Identifies problem and takes standard corrective action

- 5 (Excellent): Identifies root cause, implements innovative solution, measures impact
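
One way to keep anchors in front of interviewers at scoring time is to store the rubric as data. The following is a minimal sketch using the example anchors above; the `Rubric` class, its method names, and the tie-breaking rule are assumptions made for illustration, not part of any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rubric:
    competency: str
    anchors: dict  # rating (int) -> behavioral description (str)

    def check(self, rating):
        """Reject ratings outside the anchored scale."""
        low, high = min(self.anchors), max(self.anchors)
        if not isinstance(rating, int) or not low <= rating <= high:
            raise ValueError(
                f"{self.competency}: rating must be an integer from {low} to {high}"
            )
        return rating

    def nearest_anchor(self, rating):
        """Return the anchor text closest to a rating; ties go to the lower anchor."""
        self.check(rating)
        key = min(self.anchors, key=lambda a: (abs(a - rating), a))
        return self.anchors[key]


problem_solving = Rubric(
    competency="problem-solving",
    anchors={
        1: "Describes problem vaguely; no clear action taken",
        3: "Identifies problem and takes standard corrective action",
        5: "Identifies root cause, implements innovative solution, measures impact",
    },
)

print(problem_solving.nearest_anchor(4))
```

Resolving ties toward the lower anchor is a conservative choice for this sketch: a rating of 4 is shown the level-3 description, so the interviewer has to confirm the response clearly exceeded it.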

Interviewer Training

A strong rubric only works if interviewers know how to apply it. Untrained interviewers may fail to probe effectively, unconsciously favor certain candidate profiles, or score the same response differently.

OPM guidance recommends that interviewer training cover:

- Identifying job requirements

- Developing questions

- Avoiding common rating errors (halo effect, recency bias)

- Applying rating scales objectively

Before the process begins, all interviewers should also be aligned on:

- Note-taking practices during candidate responses

- When and how to use follow-up probes

- How to apply the rating scale consistently across candidates

Common Mistakes When Structuring Interview Questions

Many hiring teams believe they are conducting structured interviews when they are not. The following mistakes are the most common causes of structure breakdown.

Skipping the Job Analysis

Building questions without first identifying specific competencies produces generic, legally indefensible questions that fail to separate strong candidates from weak ones. It's also the hardest mistake to fix retroactively.

Without a formal job analysis, you can't demonstrate that your selection procedure is job-related and consistent with business necessity — a requirement under UGESP whenever adverse impact occurs.

Writing Questions That Are Too Hypothetical or Too Broad

Purely situational questions without behavioral equivalents allow candidates to give idealized answers that don't reflect actual past behavior. A balanced question set should include both types, with behavioral questions anchored to real experience.

Example of too broad: "How do you handle conflict?" Better: "Tell me about the most difficult conflict you've had with a coworker—what was the situation, what did you do, and what was the result?"

Failing to Standardize Across Interviewers

When different panel members ask different questions or apply different scoring criteria to the same candidate, candidates can't be compared on equal terms — and your hiring decision reflects interviewer variance, not actual performance.

AltHire AI lets teams assign specific questions to specific interviewers within a shared, standardized framework, so every candidate moves through the same evaluation regardless of who's in the room.

Not Scoring Responses Immediately

Memory degrades quickly after interviews. Interviewers who delay scoring often unconsciously revise their assessments based on recency bias or overall impressions rather than specific answers.

Fix this by scheduling 15 minutes of scoring time directly after each session. Complete your rubric-based evaluation while the responses are still specific — before the next candidate's answers start to blur with the last one's.

Frequently Asked Questions

What is an example of a structured interview question?

"Tell me about the most difficult decision you made in a previous role—what was the situation, what did you do, and what was the outcome?" This qualifies as structured because it is competency-linked (decision-making), open-ended, and STAR-formatted to draw out concrete, specific examples.

What are the six types of structured interview questions?

The six types are warm-up, verification, behavioral, situational, skills-based/technical, and culture/alignment questions. Effective interviews typically combine several of these types for a well-rounded evaluation.

What is the difference between structured and unstructured questions?

Structured questions are pre-planned, job-relevant, asked consistently to every candidate, and scored against a rubric. Unstructured questions are improvised and vary by interviewer, which makes fair comparison difficult and introduces bias.

What is structured questioning?

Structured questioning is a deliberate approach to interview design where every question ties to a specific competency and uses formats like STAR to draw out detailed responses. Answers are scored against predetermined criteria so hiring teams can compare candidates fairly and consistently.

How long should a structured interview take?

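A well-structured interview typically runs 30–50 minutes and covers 5–8 behavioral questions. That works out to roughly 5 minutes per question on average; answers that run closer to 10 minutes are only feasible with fewer questions or a longer slot. Reserve time at the end for candidate questions, and schedule scoring as a separate block immediately afterward.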
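
A quick arithmetic check helps confirm that a question set fits the slot. This sketch assumes a 45-minute interview with 5 minutes each for opening and closing; those two allowances are illustrative assumptions, not figures from any guideline.

```python
def minutes_per_question(slot_minutes, opening_minutes, closing_minutes, n_questions):
    """Average time available per question after opening and closing are reserved."""
    available = slot_minutes - opening_minutes - closing_minutes
    if available <= 0 or n_questions <= 0:
        raise ValueError("slot too short or no questions planned")
    return available / n_questions


# Assumed budget: 45-minute slot, 5 minutes to open, 5 minutes to close.
for n_questions in (5, 6, 7, 8):
    per_question = minutes_per_question(45, 5, 5, n_questions)
    print(f"{n_questions} questions -> {per_question:.1f} minutes each")
```

Under these assumptions, 5 questions leave 7 minutes each and 8 questions leave under 5, which is a useful signal for trimming the question list or extending the slot.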

How do you score structured interview responses fairly?

Fair scoring starts with pre-built rubrics that include behavioral anchors at each rating level. Score immediately after the interview before memory fades, and in panel settings, hold a brief consensus discussion to resolve any significant rating gaps before deciding.
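
For panel interviews, the consensus step can start from a simple summary of where raters disagree. The sketch below uses hypothetical ratings on a 1–5 scale and assumes that a spread of 2 or more points counts as a "significant" gap; that threshold is an illustrative choice, so set your own.

```python
from statistics import mean

# Hypothetical panel ratings: competency -> {interviewer: rating on a 1-5 scale}.
panel_ratings = {
    "problem_solving": {"interviewer_a": 4, "interviewer_b": 4, "interviewer_c": 5},
    "communication": {"interviewer_a": 2, "interviewer_b": 5, "interviewer_c": 3},
}

GAP_THRESHOLD = 2  # assumed: a spread of 2+ points triggers a consensus discussion


def summarize(panel_ratings, gap_threshold=GAP_THRESHOLD):
    """Return mean rating, spread, and a discussion flag for each competency."""
    summary = {}
    for competency, ratings in panel_ratings.items():
        values = list(ratings.values())
        spread = max(values) - min(values)
        summary[competency] = {
            "mean": round(mean(values), 2),
            "spread": spread,
            "needs_discussion": spread >= gap_threshold,
        }
    return summary


summary = summarize(panel_ratings)
for competency, result in summary.items():
    print(competency, result)
```

Flagged competencies are the ones to discuss before deciding; the mean alone would hide the disagreement on "communication" in this sample.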