Introduction

Studies show that interviewers assessing the same candidate agree only about 50% of the time, yet most hiring teams still rely on gut feel and free-form notes. When multiple candidates are in play, that approach creates noise instead of clarity, and decisions either stall or default to whoever made the strongest first impression.

Structured evaluation scorecards fix this. This guide covers:

- What candidate interview evaluation scorecards are and why they work

- What to include in an effective scorecard

- How to build one step by step

- Ready-to-use templates for common roles

TL;DR

- Interview evaluation scorecards let interviewers rate every candidate against the same criteria, reducing bias and making candidate comparisons more objective

- Include role qualifications, technical skills, soft skills, and a 1–5 rating scale in your scorecard

- Each interviewer should complete their scorecard independently before discussing candidates

- Templates should be role-specific: engineering scorecards differ significantly from sales or leadership versions

- AltHire AI auto-generates structured scorecards from job descriptions — setup takes under 10 minutes

What Is an Interview Evaluation Scorecard (and When Should You Use One)?

An interview evaluation scorecard is a structured document that standardizes how interviewers rate candidates across predefined criteria — transforming subjective impressions into measurable, comparable data points. Instead of relying on gut feelings after a day of back-to-back interviews, scorecards bring structure and consistency to every hiring decision.

When scorecards are most critical:

- High-volume hiring rounds where dozens of candidates are assessed

- Panel interviews with multiple interviewers evaluating the same candidate

- Any role where two or more finalists need objective comparison

- Regulated industries (healthcare, finance) requiring documented hiring decisions

Common situations where teams wrongly skip scorecards:

- Fast-moving startups assuming speed and intuition are enough to identify the right hire

- Small teams hiring for a single role who think structured evaluation is overkill

- Companies filling niche roles and assuming no template will fit — without trying to build one

It's also worth distinguishing two related terms. A scoring matrix is the overarching system — the criteria definitions and rating scale. A scorecard is the individual document each interviewer fills out per candidate. Both work together; neither replaces the other.

What to Include in an Interview Evaluation Scorecard

Role Qualifications Section

List must-have credentials, years of experience, educational requirements, or certifications relevant to the role. These serve as binary pass/fail gates before scoring begins. For example:

- Minimum 5 years in software development

- Bachelor's degree in Computer Science or equivalent

- Active security clearance (if applicable)
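Because these gates are pass/fail rather than scored, they can be checked mechanically before anyone assigns a rating. The Python sketch below is illustrative only: the candidate profile is assumed to be a plain dict, and its keys (`years_dev`, `cs_degree_or_equivalent`, `clearance_required`, `has_clearance`) and the `check_gates` helper are hypothetical names, not part of any particular applicant tracking system.

```python
from typing import Callable

# A gate is a (label, predicate) pair evaluated against a candidate profile dict.
# The profile keys used below are hypothetical.
Gate = tuple[str, Callable[[dict], bool]]

GATES: list[Gate] = [
    ("Minimum 5 years in software development",
     lambda c: c["years_dev"] >= 5),
    ("Bachelor's degree in Computer Science or equivalent",
     lambda c: c["cs_degree_or_equivalent"]),
    # Only enforced when the role actually requires clearance.
    ("Active security clearance (if applicable)",
     lambda c: not c["clearance_required"] or c["has_clearance"]),
]


def check_gates(candidate: dict, gates: list[Gate] = GATES) -> list[str]:
    """Return the labels of failed gates; an empty list means scoring can begin."""
    return [label for label, passes in gates if not passes(candidate)]
```

An empty list means the candidate moves on to scoring; any returned label names the gate that stopped them, which doubles as a record of why.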

Technical Skills Criteria

Define 3–5 measurable technical competencies tied directly to the job description. For a developer role, this might include:

- Coding proficiency in Python and JavaScript

- System design and architecture thinking

- Debugging and troubleshooting ability

- API integration experience

Reserve space for the interviewer to record evidence from the candidate's responses: specific examples or projects that demonstrate each competency.

Technical criteria anchor the scorecard in observable skill. The next layer captures how candidates actually work with others.

Behavioral and Interpersonal Attributes

Include soft skills that matter for the specific role, framed as observable behaviors rather than vague impressions:

- Communication: Can the candidate break down technical concepts for a non-technical audience without oversimplifying?

- Collaboration: Do they cite real cross-functional projects, or speak only about individual contributions?

- Adaptability: Have they described changing course based on feedback or new constraints?

- Initiative: Do their examples show ownership, or do they wait for direction?

Rating Scale Definition

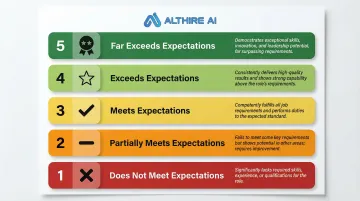

A 1–5 numerical scale is the most common approach. Each number should correspond to a specific performance descriptor:

- 1 = Does not meet expectations

- 2 = Partially meets expectations

- 3 = Meets expectations

- 4 = Exceeds expectations

- 5 = Far exceeds expectations

Descriptive-only scales (such as "Poor," "Fair," "Good") or binary Yes/No scales can work, but they limit your ability to differentiate between candidates who are close in overall fit.
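A numeric scale is also easy to enforce in whatever tool collects scores. As a minimal sketch (the `RATING_SCALE` mapping and `validate_rating` function are illustrative names), the five descriptors can live in one lookup so an out-of-range entry is rejected rather than silently recorded:

```python
# The 1-5 scale, with each number tied to its performance descriptor.
RATING_SCALE = {
    1: "Does not meet expectations",
    2: "Partially meets expectations",
    3: "Meets expectations",
    4: "Exceeds expectations",
    5: "Far exceeds expectations",
}


def validate_rating(score: int) -> str:
    """Reject anything outside the defined scale and return its descriptor."""
    if score not in RATING_SCALE:
        raise ValueError(f"Rating {score!r} is not on the 1-5 scale")
    return RATING_SCALE[score]
```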

Once scores are assigned, there's one more field every scorecard needs — and it often surfaces the most useful hiring conversations.

Interviewer Recommendation Field

Include a final recommendation section (strong yes / yes / neutral / no) that is separate from the numerical scores. This captures the interviewer's overall read after structured scoring is complete. It also flags discrepancies worth discussing in debrief — for example, when a candidate scores high numerically but the interviewer still has reservations about fit.
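One way to surface those discrepancies automatically is to compare the average rating against the recommendation. This sketch assumes hypothetical cut-offs (an average of 4.0 or higher counts as "high", 2.5 or lower as "low") that a team would tune for its own rubric; `debrief_flag` is an illustrative name.

```python
from statistics import mean
from typing import Optional

RECOMMENDATIONS = ("strong yes", "yes", "neutral", "no")


def debrief_flag(scores: dict[str, int], recommendation: str,
                 high: float = 4.0, low: float = 2.5) -> Optional[str]:
    """Return a note when the overall recommendation contradicts the numbers.

    `high` and `low` are assumed cut-offs on the 1-5 scale, not standard values.
    """
    if recommendation not in RECOMMENDATIONS:
        raise ValueError(f"Unknown recommendation: {recommendation!r}")
    avg = mean(scores.values())
    if avg >= high and recommendation in ("neutral", "no"):
        return f"High scores (avg {avg:.1f}) but recommendation is '{recommendation}'"
    if avg <= low and recommendation in ("yes", "strong yes"):
        return f"Low scores (avg {avg:.1f}) but recommendation is '{recommendation}'"
    return None  # numbers and recommendation point the same way
```

A `None` result means the two signals agree; a returned note goes on the debrief agenda.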

How to Build an Interview Scorecard Step by Step

A scorecard built after the interview is useless — it must be designed before any candidate speaks to the team, anchored to the job requirements document.

Step 1: Define the Role's Key Success Criteria

Start by listing what top performance looks like in the role within the first 6–12 months, then work backwards to identify the skills, behaviors, and qualifications that predict that performance. Research by Campion et al. (1997) established that basing interview questions and scoring criteria on formal job analysis is a primary component of interview structure, enhancing both reliability and validity.

Avoid copying job descriptions verbatim — translate requirements into assessable traits. For example, instead of "strong communication skills," specify "ability to present technical findings to non-technical executives."

Step 2: Assign Criteria Weights

Not all criteria carry equal importance. Assign weights or priority levels to each criterion so interviewers know which scores matter most when making a final call:

- Critical — must-have for the role; a low score here typically disqualifies

- Important — strong differentiator between candidates

- Nice-to-have — adds value but won't make or break the decision

A 2025 meta-analysis by Wingate found that interviewer assessments of task performance constructs predict actual task performance (ρ = .30), while assessments of contextual constructs predict contextual performance (ρ = .28). In other words, criteria aligned with specific job demands tend to outperform generic evaluations. When two candidates score nearly the same overall but diverge on a critical criterion, weighting gives the hiring team a clear tiebreaker.
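To make the weighting concrete, here is a minimal Python sketch. The numeric weights (3 for critical, 2 for important, 1 for nice-to-have) and the rule that a critical score below 3 disqualifies are assumptions for illustration, not a standard; `weighted_score` is a hypothetical helper.

```python
# Assumed numeric weights for the three priority levels (illustrative only).
WEIGHTS = {"critical": 3, "important": 2, "nice_to_have": 1}
# Assumed rule: scoring below "Meets expectations" (3) on a critical criterion disqualifies.
CRITICAL_FLOOR = 3


def weighted_score(ratings: dict[str, int],
                   priorities: dict[str, str]) -> tuple[float, bool]:
    """Return (weighted average on the 1-5 scale, disqualified flag)."""
    total = 0
    weight_sum = 0
    disqualified = False
    for criterion, score in ratings.items():
        level = priorities[criterion]
        total += WEIGHTS[level] * score
        weight_sum += WEIGHTS[level]
        if level == "critical" and score < CRITICAL_FLOOR:
            disqualified = True
    return total / weight_sum, disqualified


# Two hypothetical candidates with the same unweighted average (about 3.33)
priorities = {"coding": "critical", "system_design": "important",
              "api_integration": "nice_to_have"}
candidate_a = {"coding": 4, "system_design": 3, "api_integration": 3}
candidate_b = {"coding": 3, "system_design": 3, "api_integration": 4}
print(weighted_score(candidate_a, priorities))  # (3.5, False)
print(weighted_score(candidate_b, priorities))  # roughly (3.17, False)
```

The unweighted averages tie, but the weighted score separates the two because candidate A is stronger on the critical criterion.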

Step 3: Choose and Define Your Rating Scale

Define what each point on the scale means in behavioral terms before distributing the scorecard — this is what keeps scores consistent across interviewers. Walk all interviewers through the rubric before the first interview so everyone is working from the same standard.

For example, for "coding proficiency":

- 3 (Meets): Writes clean, functional code with minimal syntax errors

- 4 (Exceeds): Writes optimized code with consideration for scalability

- 5 (Far exceeds): Demonstrates advanced patterns and proactively identifies edge cases
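The example defines anchors only for 3 through 5; before the rubric goes out, points 1 and 2 need descriptors too, or interviewers will fill the gap differently. A small completeness check, assuming each criterion's anchors are stored as a dict keyed by score (the names here are illustrative):

```python
# Behavioral anchors for "coding proficiency" as given above (points 1 and 2 not yet written).
CODING_ANCHORS = {
    3: "Writes clean, functional code with minimal syntax errors",
    4: "Writes optimized code with consideration for scalability",
    5: "Demonstrates advanced patterns and proactively identifies edge cases",
}


def missing_anchors(anchors: dict[int, str], scale: range = range(1, 6)) -> list[int]:
    """Return the scale points that still lack a behavioral descriptor."""
    return [point for point in scale if not anchors.get(point, "").strip()]


print(missing_anchors(CODING_ANCHORS))  # [1, 2]
```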

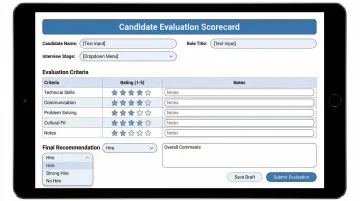

Step 4: Design the Scorecard Document and Assign Interviewers

Build a clean, one-page document with:

- Candidate name

- Role title

- Interview stage

- Interviewer name

- Criteria rows with rating fields

- Notes column for evidence

- Final recommendation field
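If the scorecard lives in an internal tool or spreadsheet export rather than on paper, the same one-page layout maps onto a small data structure. This Python sketch mirrors the fields listed above; the class and field names are illustrative, and the `is_complete` check is an assumption about what "done" means (at least one criterion, every criterion rated, and a recommendation recorded).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CriterionRow:
    name: str
    rating: Optional[int] = None  # 1-5, filled in after the interview
    evidence: str = ""            # notes column: specific examples the candidate gave


@dataclass
class Scorecard:
    candidate_name: str
    role_title: str
    interview_stage: str
    interviewer_name: str
    criteria: list[CriterionRow] = field(default_factory=list)
    final_recommendation: Optional[str] = None  # strong yes / yes / neutral / no

    def is_complete(self) -> bool:
        """True once every criterion is rated and a recommendation is recorded."""
        return (bool(self.criteria)
                and all(row.rating is not None for row in self.criteria)
                and self.final_recommendation is not None)
```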

Assign each interviewer specific criteria areas to probe so there's no overlap and all dimensions are covered. If you're building scorecards at scale, AltHire AI generates structured scorecards directly from the job description — consistent formatting, pre-mapped criteria, ready in under 10 minutes.
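Coverage and overlap are easy to check before interview day. A sketch, assuming assignments are kept as a mapping from interviewer to the criteria they will probe (the interviewer names and the `check_assignments` helper are hypothetical):

```python
from collections import Counter


def check_assignments(criteria: list[str],
                      assignments: dict[str, list[str]]) -> dict[str, list[str]]:
    """Report criteria no interviewer probes and criteria probed by more than one."""
    counts = Counter(c for assigned in assignments.values() for c in assigned)
    return {
        "uncovered": [c for c in criteria if counts[c] == 0],
        "overlapping": [c for c in criteria if counts[c] > 1],
    }


# Hypothetical panel for a developer role
criteria = ["coding", "system design", "debugging", "communication"]
assignments = {"Alex": ["coding", "debugging"], "Sam": ["debugging", "system design"]}
print(check_assignments(criteria, assignments))
# {'uncovered': ['communication'], 'overlapping': ['debugging']}
```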

Step 5: Collect and Calibrate Independently

Instruct each interviewer to complete their scorecard immediately after the interview and before any group debrief. Once all scorecards are submitted, the hiring team compares scores. When two interviewers rate the same criterion three or more points apart, that gap needs a direct conversation — not averaging. Surface the specific evidence each person cited, then decide whether the discrepancy reflects a genuine split or a rubric misread.
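Finding those gaps by hand gets tedious on a large panel. The sketch below compares every pair of interviewers on each shared criterion and lists the pairs that are three or more points apart, matching the threshold above. The data shape and names (`rating_gaps`, the interviewer keys) are assumptions for illustration; the output is a discussion list, deliberately not an averaged score.

```python
from itertools import combinations


def rating_gaps(ratings_by_interviewer: dict[str, dict[str, int]],
                threshold: int = 3) -> list[tuple[str, str, str, int]]:
    """Return (criterion, interviewer_a, interviewer_b, gap) for every gap >= threshold."""
    flagged = []
    for (name_a, a), (name_b, b) in combinations(ratings_by_interviewer.items(), 2):
        for criterion in a.keys() & b.keys():  # only criteria both interviewers scored
            gap = abs(a[criterion] - b[criterion])
            if gap >= threshold:
                flagged.append((criterion, name_a, name_b, gap))
    return sorted(flagged)  # stable order for the debrief agenda


# Hypothetical panel ratings for one candidate
ratings = {
    "Alex": {"coding": 5, "communication": 3},
    "Sam": {"coding": 2, "communication": 4},
    "Priya": {"coding": 4, "communication": 1},
}
for item in rating_gaps(ratings):
    print(item)
# ('coding', 'Alex', 'Sam', 3)
# ('communication', 'Sam', 'Priya', 3)
```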

Interview Scorecard Templates for Common Roles

Role-specific templates outperform generic ones. A universal scorecard trades precision for convenience and risks missing the criteria that actually differentiate top performers in a given function.

Technical / Engineering Role Template

Key criteria to include:

- Approaches complex problems systematically, breaking down ambiguity into workable steps

- Considers scalability and maintainability in code quality or system design decisions

- Explains technical solutions clearly to non-engineers without losing accuracy

- Adapts effectively when requirements are unclear or shift mid-project

- Brings a unique perspective or experience that adds to the team's existing strengths

Use a 1–5 scale per criterion with an evidence note field. Include a "would you work with this person" flag as a soft recommendation signal.

According to the Skills Framework for the Information Age (SFIA 8), engineering scorecards should assess systematic, disciplined approaches to development, database design, and systems integration — not just generic problem-solving skills.

Client-Facing / Sales Role Template

Key criteria:

- Articulates value propositions concisely without over-explaining

- Identifies customer pain points by asking targeted, open-ended questions

- Responds to objections with evidence and composure rather than defensiveness

- Demonstrates genuine understanding of client needs, not just surface-level empathy

- Maintains professionalism and focus during high-pressure or difficult conversations

If your process includes a role-play scenario, add a dedicated rating field for it. The O*NET database for Technical and Scientific Sales Representatives confirms that top performers combine product knowledge with interpersonal skills like persuasion and negotiation — both worth scoring explicitly.

Leadership / Management Role Template

Key criteria:

- Connects individual decisions to long-term organizational goals, not just immediate outputs

- Articulates a clear, specific approach to coaching team members and supporting their growth

- Explains how they prioritize and decide with incomplete or conflicting information

- Describes concrete examples of resolving team disagreements, not just principles

- Demonstrates ability to drive alignment across functions without relying on direct authority

Leadership scorecards typically require a longer interview window and multi-round scoring to surface these behaviors reliably. The SHRM Body of Applied Skills and Knowledge (BASK) defines nine core behavioral competencies across Leadership, Interpersonal, and Business clusters, giving hiring teams a structured reference for what strong management candidates should actually demonstrate.

General / Versatile Template (Starter)

For teams building their first scorecard or hiring across varied roles, this minimal structure covers the essentials before you invest in role-specific versions.

Starter structure:

| Field | Description |
|---|---|
| Role | Position title |
| Candidate Name | Full name |
| Interview Stage | Phone screen, technical round, final |
| Interviewer | Your name |
| Criteria (3–5 rows) | Rating + notes for each |
| Overall Score | Aggregate or average |
| Final Recommendation | Strong yes / yes / neutral / no |

It is a quick starting point for early-stage companies and first-time scorecard users.
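For teams that keep scorecards in spreadsheets, the starter structure can be generated as a blank CSV. A minimal sketch using Python's standard `csv` module; the row layout and the `starter_scorecard_csv` name are illustrative choices, not a required format.

```python
import csv
import io

# Header fields from the starter table; criteria rows are supplied per role.
HEADER_FIELDS = ["Role", "Candidate Name", "Interview Stage", "Interviewer"]


def starter_scorecard_csv(criteria: list[str]) -> str:
    """Render a blank starter scorecard as CSV text, one row per field or criterion."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    for name in HEADER_FIELDS:
        writer.writerow([name, ""])
    writer.writerow(["Criterion", "Rating (1-5)", "Notes"])
    for criterion in criteria:
        writer.writerow([criterion, "", ""])  # left blank for the interviewer
    writer.writerow(["Overall Score", ""])
    writer.writerow(["Final Recommendation", ""])
    return buf.getvalue()


print(starter_scorecard_csv(["Communication", "Problem solving", "Ownership"]))
```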

Best Practices for Using Interview Scorecards Effectively

Score Independently Before Debriefing

The biggest scorecard mistake is allowing group discussion before individual scores are submitted — one dominant voice can anchor the entire team's ratings. Require each interviewer to finalize and submit their scorecard within 30 minutes of the interview ending.
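If scorecards are submitted through a form or tool that records timestamps, the 30-minute rule can be checked automatically. A minimal sketch, assuming the interview end, submission, and debrief start times are available as `datetime` values (the `submission_ok` name is hypothetical):

```python
from datetime import datetime, timedelta

SUBMISSION_WINDOW = timedelta(minutes=30)


def submission_ok(interview_end: datetime, submitted_at: datetime,
                  debrief_start: datetime) -> bool:
    """True if the scorecard arrived within 30 minutes of the interview ending
    and before the group debrief started."""
    within_window = interview_end <= submitted_at <= interview_end + SUBMISSION_WINDOW
    return within_window and submitted_at < debrief_start
```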

AltHire AI's platform generates instant reports the moment a candidate completes their interview, so each interviewer can score independently before any group discussion begins.

Audit Scorecards for Pattern Bias Periodically

Even structured processes can reflect systemic bias if the criteria themselves are poorly defined or interviewers are not calibrated. Review scoring patterns across demographic groups every quarter.
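A quarterly review can start with two numbers per group: the mean overall score and the share of candidates who advanced. This sketch flags any group whose advance rate falls below 80% of the highest group's rate, loosely modeled on the four-fifths rule of thumb used in US adverse-impact analysis; it is a screening heuristic to prompt a closer look, not a conclusion. Group labels, the record format, and `audit_by_group` are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean


def audit_by_group(records: list[tuple[str, float, bool]],
                   ratio_floor: float = 0.8) -> dict:
    """Summarize mean score and advance rate per group, and flag groups whose
    advance rate is below `ratio_floor` times the highest group's rate."""
    groups = defaultdict(list)
    for group, score, advanced in records:
        groups[group].append((score, advanced))

    summary = {}
    for group, rows in groups.items():
        summary[group] = {
            "mean_score": mean(score for score, _ in rows),
            "advance_rate": sum(adv for _, adv in rows) / len(rows),
        }

    top_rate = max(s["advance_rate"] for s in summary.values())
    for s in summary.values():
        # Assumed heuristic: compare each group to the best-performing group.
        s["flagged"] = top_rate > 0 and s["advance_rate"] < ratio_floor * top_rate
    return summary
```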

AltHire AI's bias-free assessment engine flags inter-rater inconsistencies and highlights where scoring diverges significantly across interviewers — giving hiring teams a clear, objective basis for calibration.

Treat Scorecards as Hiring Documentation

Scorecards are not just decision aids — they are records. Retain interview documentation for compliance purposes, especially in regulated industries like healthcare or finance.

AltHire AI's reporting captures everything needed for a full audit trail:

- Video recordings of each interview session

- Time-stamped transcripts for quick reference

- Proctoring data to verify assessment integrity

According to AltHire AI customer data, organizations using structured scorecards achieved 70% faster time-to-hire and $12M in annual savings per 1,000 hires.

Conclusion

Interview evaluation scorecards only work when used consistently; building them is the easy part. Start simple with the starter template, calibrate with your team, and iterate toward role-specific versions as hiring patterns mature. The investment in structure delivers faster decisions, reduced bias, and better hires.

If you want to take scorecard consistency further, platforms like AltHire AI embed structured, bias-free scoring directly into the interview process — so evaluations are captured at the moment of assessment, not reconstructed afterward.

Frequently Asked Questions

How are candidates scored in a candidate scoring report?

Candidates are rated on predefined criteria using a numerical scale (typically 1–5), with each criterion scored individually by the interviewer based on observed evidence from the interview. Those individual scores roll up into an overall rating, enabling objective comparison across candidates.

What should be included in an interview scorecard?

A complete interview scorecard should cover:

- Role qualifications and technical skills

- Behavioral and soft skill attributes

- A defined rating scale

- An evidence/notes field for documenting specific examples

- A final interviewer recommendation, kept separate from numerical scores

What is the difference between an interview scorecard and an interview scoring matrix?

The scoring matrix is the overarching framework that defines criteria and rating scales, while the scorecard is the individual document each interviewer fills out to rate a specific candidate against that matrix. In practice, the matrix is built once per role; scorecards are completed once per candidate.

How many criteria should an interview scorecard include?

5–8 criteria is a practical range: enough to cover key role dimensions without overwhelming the interviewer. Fewer than 5 risks gaps in competency coverage; more than 8 tends to make scoring less consistent and harder to complete reliably.

Can interview scorecards help reduce hiring bias?

Scorecards reduce bias by ensuring every candidate is evaluated on the same criteria with the same scale, removing reliance on subjective impressions. However, the criteria themselves must be role-relevant and regularly audited to avoid encoding existing biases into the structured process.

When should interviewers fill out their scorecards?

Scorecards should be completed immediately after the interview, before any group debrief or discussion, to prevent groupthink and anchor bias from influencing individual assessments.