Introduction

Most hiring teams didn't choose AI proctoring — they inherited it. Video interviews became the default during the pandemic, and AI monitoring followed. Today, 90% of employers use video interviews in early hiring stages, up from just 25% pre-pandemic. The remote proctoring market reached $735.17 million in 2025 and is projected to hit $2.39 billion by 2033, reflecting how AI proctoring has moved from optional to expected in high-volume hiring.

Yet most organizations and candidates encounter AI proctoring without understanding what it actually monitors, how it makes decisions, or what separates a legitimate flag from a false alarm. This knowledge gap breeds distrust and drives poor implementation choices. When false positive rates can reach 50%, that's not a rounding error — it's candidates wrongly flagged and hiring decisions built on bad data. This guide breaks down exactly how AI proctoring works, what it can and can't detect, and how to use it without undermining the process it's meant to protect.

TL;DR

- AI proctoring monitors candidates through webcam, microphone, and screen data analyzed by machine learning models

- It detects suspicious signals (extra faces, gaze shifts, background voices) and flags them for human review

- Used across hiring, education, and certification to verify that assessments reflect genuine candidate performance

- Benefits include scalability and consistency; limitations include false positives and privacy concerns

- For hiring teams, it confirms the actual candidate completed the assessment, reducing impersonation and coaching risks

What Is AI Proctoring?

AI proctoring is a technology-driven supervision system that monitors a candidate's behavior, environment, and identity during a remote session — using machine learning, computer vision, and audio analysis. It replaces or supplements human invigilators when scale makes manual oversight impractical.

As remote interviews and assessments scale to hundreds or thousands of simultaneous sessions, human monitoring becomes logistically impossible. Traditional human-led proctoring operates at a 1:12 proctor-to-candidate ratio for low-stakes exams, and 1:4 for high-stakes scenarios—unsustainable at enterprise volume.
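To see why those ratios break down at scale, here is a minimal Python sketch of the staffing arithmetic. The figure of 1,000 concurrent sessions is an illustrative assumption, not a number from any vendor or study.

```python
import math

def proctors_needed(candidates: int, candidates_per_proctor: int) -> int:
    """Human proctors required to cover `candidates` simultaneous sessions."""
    return math.ceil(candidates / candidates_per_proctor)

sessions = 1000  # assumed concurrent sessions, for illustration only

low_stakes = proctors_needed(sessions, 12)   # 1:12 proctor-to-candidate ratio
high_stakes = proctors_needed(sessions, 4)   # 1:4 proctor-to-candidate ratio

print(low_stakes, high_stakes)  # 84 250
```

At that assumed volume, a high-stakes session would need 250 proctors online at the same moment.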

AI proctoring is not a final judge of misconduct. It is a flagging system that surfaces anomalies for human review.

According to the European Data Protection Supervisor, hybrid proctoring systems require that "events signalled as suspicious by the automated proctoring system are reviewed by humans." Decisions on violations always rest with a human reviewer.

Two delivery models are in common use:

- Live proctoring — Real-time monitoring with immediate alert capability

- Recorded/post-session proctoring — AI reviews footage after the session and flags timestamped moments

Both use the same core detection mechanisms. The difference is when intervention can happen.

How Does AI Proctoring Work?

AI proctoring follows a defined sequence: pre-session identity verification, active monitoring during the assessment, and post-session alert review.

Initiation and Identity Verification

The session begins with an identity check before the assessment starts. Candidates complete a live photo capture matched against a submitted ID document or stored facial profile. This stage can be:

- Automated — AI matches face and document against the stored profile

- Manual — A human reviewer confirms the match

- Hybrid — Combines both approaches

This is the first gate against impersonation. Systems use Presentation Attack Detection (PAD) to prevent spoofing—detecting when candidates use photos or videos instead of live faces.
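One common way to automate the face match is to compare numeric face embeddings and accept the match only above a similarity threshold. The sketch below assumes that approach. The embedding values, the 0.6 threshold, and the function names are hypothetical; a real system would get its embeddings from a face-recognition model, tune the threshold on its own data, and run spoof detection separately.

```python
import math
from typing import Sequence

def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embedding must not be all zeros")
    return dot / (norm_a * norm_b)

def verify_identity(live: Sequence[float],
                    reference: Sequence[float],
                    threshold: float = 0.6) -> str:
    """Return 'match' above the (assumed) threshold, else route to a human."""
    score = cosine_similarity(live, reference)
    return "match" if score >= threshold else "needs_human_review"

# Hypothetical 3-dimensional embeddings; real ones have hundreds of dimensions.
reference = [0.10, 0.80, 0.30]
same_person = [0.12, 0.79, 0.31]
different_person = [0.90, -0.10, 0.20]

print(verify_identity(same_person, reference))       # match
print(verify_identity(different_person, reference))  # needs_human_review
```

Routing a failed match to a reviewer instead of rejecting it outright mirrors the hybrid option in the list above.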

Core Monitoring Operation

During the live session, AI continuously processes video and audio feeds, running multiple detection models simultaneously:

- Person detection — Confirms only one individual is present

- Face recognition — Verifies the registered candidate remains visible

- Gaze tracking — Monitors eye and head direction

- Object detection — Identifies unauthorized items (phones, notes)

- Audio classification — Detects secondary voices or unusual sounds

These models don't just capture snapshots. They analyze patterns over time — how often a candidate looks away, not just whether they looked away once — to distinguish normal behavior from suspicious trends.
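The difference between a single glance and a trend can be sketched with a sliding window over per-frame gaze results. This is a simplified illustration, not any platform's actual logic: the class name, the 10-frame window, and the 50% limit are all assumptions.

```python
from collections import deque

class GazeAwayMonitor:
    """Tracks the share of recent frames in which the candidate looked away."""

    def __init__(self, window_size: int = 10, max_away_ratio: float = 0.5):
        self.window = deque(maxlen=window_size)  # keeps only the newest frames
        self.max_away_ratio = max_away_ratio

    def update(self, looking_away: bool) -> bool:
        """Record one frame. Returns True only when the window is full
        and the away ratio exceeds the configured limit."""
        self.window.append(looking_away)
        if len(self.window) < self.window.maxlen:
            return False
        return sum(self.window) / len(self.window) > self.max_away_ratio

# A single glance away never fills enough of the window to flag.
glance = GazeAwayMonitor()
print(any(glance.update(f) for f in [False] * 9 + [True] + [False] * 5))  # False

# Sustained looking away pushes the ratio past the limit.
sustained = GazeAwayMonitor()
print(any(sustained.update(f) for f in [False] * 4 + [True] * 8))  # True
```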

Accuracy depends heavily on equipment quality. Research on consumer webcam limitations shows that standard webcams operate at low sampling rates (15–100 Hz) with poor spatial sensitivity (roughly 4 degrees of visual angle), making precise eye-tracking error-prone compared to laboratory-grade equipment.
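To put the 4-degree figure in practical terms, the sketch below converts an angular error into a distance on the screen. The 60 cm viewing distance is an assumption chosen for illustration, and the function name is hypothetical.

```python
import math

def on_screen_error_cm(angular_error_deg: float, viewing_distance_cm: float) -> float:
    """Distance on the screen plane covered by a given angular error."""
    return viewing_distance_cm * math.tan(math.radians(angular_error_deg))

# Assumed: the candidate sits about 60 cm from the display.
error = on_screen_error_cm(4, 60)
print(f"{error:.1f} cm")  # 4.2 cm
```

An uncertainty of roughly 4 cm is enough to blur the line between looking at the edge of the screen and looking just past it.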

Regulation and Alert Classification

AI proctoring manages signal volume by classifying detected behaviors into severity tiers:

- Clear violation category — Second person visible, phone detected on camera

- Suspicious behavior category — Repeated gaze shifts, background voices requiring human judgment

Without this classification layer, proctors would spend most of their time reviewing routine movements rather than genuine risks. The tiered structure keeps human reviewers focused on events that actually warrant a decision.
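A rough sketch of that classification layer follows. The event names are hypothetical labels for the examples listed above, and the third "routine" tier is an assumption added here to represent movements that never reach a reviewer.

```python
from enum import Enum
from typing import Iterable, List, Tuple

class Severity(Enum):
    CLEAR_VIOLATION = "clear_violation"
    SUSPICIOUS = "suspicious"
    ROUTINE = "routine"  # assumed tier: logged but not sent for review

# Hypothetical event labels mapped to the two categories named in the text.
CLEAR_VIOLATIONS = {"second_person_visible", "phone_detected"}
SUSPICIOUS_EVENTS = {"repeated_gaze_shift", "background_voice"}

# Lower number means reviewed first.
PRIORITY = {Severity.CLEAR_VIOLATION: 0, Severity.SUSPICIOUS: 1}

def classify_event(event_type: str) -> Severity:
    if event_type in CLEAR_VIOLATIONS:
        return Severity.CLEAR_VIOLATION
    if event_type in SUSPICIOUS_EVENTS:
        return Severity.SUSPICIOUS
    return Severity.ROUTINE

def review_queue(event_types: Iterable[str]) -> List[Tuple[str, Severity]]:
    """Drop routine events and put clear violations ahead of suspicious ones."""
    classified = [(e, classify_event(e)) for e in event_types]
    flagged = [item for item in classified if item[1] is not Severity.ROUTINE]
    return sorted(flagged, key=lambda item: PRIORITY[item[1]])

queue = review_queue(["head_scratch", "background_voice", "phone_detected"])
print([event for event, _ in queue])  # ['phone_detected', 'background_voice']
```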

Output and Review

The session produces:

- Timestamped activity log

- Flagged video clips

- Alert summary for efficient human audit

The AI organizes evidence. Final verdicts remain with human reviewers. Output quality improves when organizations define behavioral expectations upfront — what counts as suspicious, whether background noise is permitted, and whether reference materials are allowed.
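As an illustration of how a timestamped log might roll up into an alert summary, here is a small sketch. The `Alert` structure, the event names, and the summary layout are assumptions; actual report formats vary by platform.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Alert:
    offset_seconds: int  # seconds since the session started
    event_type: str      # hypothetical event label

def format_offset(seconds: int) -> str:
    """Render a session offset as HH:MM:SS."""
    hours, remainder = divmod(seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"

def summarize(alerts: List[Alert]) -> Dict[str, object]:
    """Count alerts per type and list them in chronological order."""
    ordered = sorted(alerts, key=lambda a: a.offset_seconds)
    return {
        "counts": dict(Counter(a.event_type for a in ordered)),
        "timeline": [f"{format_offset(a.offset_seconds)} {a.event_type}" for a in ordered],
    }

summary = summarize([
    Alert(125, "background_voice"),
    Alert(40, "phone_detected"),
    Alert(300, "background_voice"),
])
print(summary["timeline"][0])  # 00:00:40 phone_detected
```

A reviewer can scan the counts first, then jump to the timeline entries that matter.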

What Does AI Proctoring Actually Monitor?

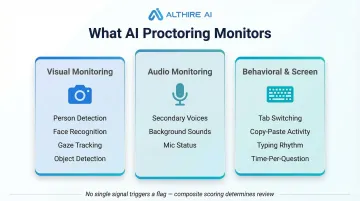

AI proctoring systems work across three monitoring layers—visual, audio, and behavioral. Each captures different risk signals, and most platforms combine them into a composite integrity score rather than acting on any single flag.

Visual Monitoring

- Person detection — Flags if more than one individual appears in frame

- Face recognition — Confirms the registered candidate remains visible throughout

- Gaze tracking — Monitors eye and head direction to identify sustained attention away from screen

- Object detection — Identifies unauthorized items (phones, notes) within camera range

NIST's Face Recognition Vendor Test (FRVT) found that false positive rates in facial recognition algorithms vary by factors of 10 to beyond 100 times across different demographics—a critical consideration for fairness.

Audio Monitoring

The microphone feed is analyzed for:

- Secondary voices suggesting external assistance

- Unusual background sounds indicating non-isolated environments

- Confirmation that the microphone remains active throughout

Behavioral and Screen Monitoring

Beyond camera and audio, systems track:

- Tab switching — Indicates potential research or external resource access

- Copy-paste activity — Suggests answers sourced from elsewhere

- Typing rhythm anomalies — Deviations from normal patterns

- Time-per-question patterns — Unusually fast or slow responses

No single signal typically triggers a flag on its own. According to research on keystroke dynamics and response timing, these behavioral patterns are most reliable when evaluated together as part of a composite risk assessment.
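One simple way to evaluate signals together is a weighted sum compared against a review threshold. The sketch below assumes each signal has already been normalized to a 0 to 1 range; the weights, the 0.5 threshold, and the signal names are hypothetical and would need calibration against reviewed sessions.

```python
from typing import Mapping

# Hypothetical weights; they sum to 1 so the score also stays in [0, 1].
WEIGHTS = {
    "tab_switching": 0.3,
    "copy_paste": 0.3,
    "typing_rhythm": 0.2,
    "time_per_question": 0.2,
}
REVIEW_THRESHOLD = 0.5  # assumed cut-off for sending a session to a reviewer

def composite_risk(signals: Mapping[str, float]) -> float:
    """Weighted sum of normalized signals; missing signals count as 0."""
    unknown = set(signals) - set(WEIGHTS)
    if unknown:
        raise KeyError(f"unknown signals: {sorted(unknown)}")
    for name, value in signals.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())

def needs_review(signals: Mapping[str, float],
                 threshold: float = REVIEW_THRESHOLD) -> bool:
    return composite_risk(signals) >= threshold

# One strong signal alone stays below the threshold.
print(needs_review({"tab_switching": 1.0}))  # False

# Several moderate signals together cross it.
print(needs_review({name: 0.7 for name in WEIGHTS}))  # True
```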

Benefits and Limitations of AI Proctoring

Core Operational Benefits

- Scalability — Monitors thousands of simultaneous sessions at consistent quality

- Cost reduction — Reduces human invigilator expense and scheduling burden

- Consistency — Applies the same evaluation criteria to every candidate, reducing reviewer-to-reviewer variation (though the underlying models can still carry demographic bias)

Primary Limitations

False positives remain a real challenge. Independent research shows AI detection tools can exhibit false positive rates as high as 50%, and a candidate looking off-screen to think can register as suspicious. Hybrid approaches that combine AI detection with human review are reported to reduce false positive impact by 85%, bringing rates down to 3-5%. An 85% cut from the 50% worst case would still leave 7.5%, so the 3-5% figure implies a lower starting rate.
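For teams comparing vendor claims, the arithmetic linking a starting rate, a relative reduction, and a resulting rate can be checked in a few lines. The function names below are illustrative; the inputs are the figures quoted above.

```python
def rate_after_reduction(baseline_rate: float, relative_reduction: float) -> float:
    """False positive rate left after a relative reduction is applied."""
    return baseline_rate * (1 - relative_reduction)

def baseline_for(target_rate: float, relative_reduction: float) -> float:
    """Starting rate that a given reduction would bring down to `target_rate`."""
    return target_rate / (1 - relative_reduction)

# Worst case quoted above: 50% false positives, reduced by 85%.
print(f"{rate_after_reduction(0.50, 0.85):.1%}")  # 7.5%

# Starting rates that an 85% reduction would bring down to 3% and 5%.
print(f"{baseline_for(0.03, 0.85):.1%}")  # 20.0%
print(f"{baseline_for(0.05, 0.85):.1%}")  # 33.3%
```

When a vendor quotes both a reduction and a resulting rate, asking for the starting rate they measured makes the two numbers comparable.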

Privacy concerns around biometric data and video storage must be managed under regulations like GDPR. The Illinois Biometric Information Privacy Act (BIPA) has triggered lawsuits against platforms like HireVue for allegedly collecting facial and voice data without proper consent.

Regulatory action goes beyond lawsuits. The FTC banned Rite Aid from using facial recognition for five years after false positives disproportionately impacted people of color. That order concerned retail surveillance rather than proctoring, but it signals how regulators may treat biometric systems that produce biased errors.

Equipment and environment matter. Poor webcams, inconsistent lighting, or unstable internet can trigger false alerts with no connection to actual misconduct — a frustrating outcome for candidates and reviewers alike.

AI proctoring works best as part of a layered system: automated monitoring sets the baseline, but clear session rules, candidate guidance, and mandatory human review before any action determine whether the system is fair in practice.

Where Is AI Proctoring Used?

Primary Use Contexts

- Remote hiring interviews and skills assessments

- Academic and university examinations

- Professional certification exams

- Large-scale recruitment screening

In each case, the goal is the same: confirm the person being evaluated is who they claim to be, and that they're working without unauthorized assistance. Adoption is accelerating fast — by 2030, AI recruitment adoption is projected to reach 95% in IT, 90% in Healthcare, and 80% in Finance.

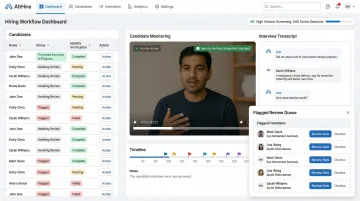

What Organizations Should Look For

When selecting an AI proctoring solution for hiring:

- Uses multi-factor authentication with spoof detection for identity verification

- Classifies alerts by severity with clear human review workflows

- Complies with GDPR, CCPA, and biometric data regulations

- Allows configurable flagging thresholds based on interview format
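Configurable thresholds could look something like the sketch below, where each interview format carries its own limits. Every field name, format name, and value here is a hypothetical example, not a setting from any real product.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FlagThresholds:
    max_gaze_away_ratio: float       # share of recent frames allowed off-screen
    allow_background_noise: bool
    allow_reference_materials: bool

# Hypothetical per-format settings, for illustration only.
THRESHOLDS_BY_FORMAT = {
    "live_coding": FlagThresholds(0.6, False, True),
    "knowledge_quiz": FlagThresholds(0.3, False, False),
}

# Conservative fallback when a format has no explicit entry.
DEFAULT_THRESHOLDS = FlagThresholds(0.3, False, False)

def thresholds_for(interview_format: str) -> FlagThresholds:
    return THRESHOLDS_BY_FORMAT.get(interview_format, DEFAULT_THRESHOLDS)

print(thresholds_for("live_coding").allow_reference_materials)  # True
print(thresholds_for("unlisted_format").max_gaze_away_ratio)    # 0.3
```

Keeping these values in one place also gives reviewers a written record of what each session was allowed to include.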

Platforms like AltHire AI embed 100% AI proctored interviews directly into the hiring workflow, covering identity verification, behavioral monitoring, and flagged review. Hiring teams get a single system rather than stitching proctoring onto separate tools — especially useful for high-volume screening, where AltHire AI delivers 350+ weekly interviews with comprehensive proctoring built in.

Frequently Asked Questions

How does AI proctoring work?

AI proctoring uses webcam, microphone, and screen data analyzed by machine learning models to detect suspicious behavior, either in real time or from a recording. It then classifies alerts by severity and surfaces flagged moments for human review. It does not make autonomous decisions about violations.

Can AI detect cheaters?

AI can detect behavioral signals associated with cheating—such as secondary faces, unauthorized objects, or unusual gaze patterns—but it cannot confirm cheating with certainty. A human reviewer must interpret each flag in context before any action is taken.

Can a proctor see my screen?

Depending on platform configuration, screen monitoring may be active, capturing open applications, tab switches, or screen content. Candidates are typically informed prior to the session, and screen access is governed by the organization's privacy and consent policies.

Should I opt out of AI screening?

Opting out is not usually available once you've agreed to a proctored assessment format, as it's typically a requirement of the process. If you have concerns about specific aspects, contact the hiring organization to understand what is monitored and why.

How do I prove I didn't use AI?

The session record is the main evidence. AI proctoring captures behavioral patterns throughout the session: typing rhythm, response timing, gaze patterns, and screen activity. Reviewers can read the full session log alongside your responses to interpret any unusual pattern in context.