Introduction

Many hiring managers run strong interviews but stumble at the documentation stage. Reports come back too vague, too inconsistent, or too late to influence decisions. According to research by Huffcutt et al., unstructured interviews yield inter-rater reliabilities of just 0.37 to 0.40, meaning two interviewers evaluating the same candidate will often reach different conclusions.

That inconsistency is exactly what a well-structured assessment report is designed to fix. With 74% of employers admitting to hiring the wrong person and bad hires costing up to $240,000, the format, content, and timing of your report directly affect hire quality. This guide covers what to include, how to structure it, and what separates reports that drive decisions from ones that gather dust.

TL;DR

- A candidate assessment report documents interview observations, competency scores, and hiring recommendations in a structured format

- Strong reports include candidate metadata, evaluation criteria, scored ratings backed by behavioral evidence, and a clear hiring recommendation

- Consistent reports require predefined criteria, standardized rubrics, and submission within 24 hours of the interview

- Common mistakes include vague commentary, skipping evidence-based scoring, and letting bias shape ratings

- AI-powered platforms can generate structured reports right after each interview, reducing manual effort and scoring inconsistency

What Is a Candidate Assessment Report (and Why Does It Matter)?

Defining the Assessment Report

A candidate assessment report is a structured document capturing how a candidate performed across predefined competencies, skills, and behavioral indicators during an interview or evaluation process. Unlike informal notes or post-call impressions, these reports follow a consistent format — making evaluations comparable across candidates, traceable for compliance, and defensible if hiring decisions are ever questioned.

The Business Case for Documentation

Hiring decisions made without documented assessments are harder to defend, replicate, or learn from. The financial stakes are real: the U.S. Department of Labor estimates that bad hires cost up to 30% of first-year salary. SHRM research puts the total even higher — up to $240,000 when you factor in recruitment, compensation, training, and lost productivity.

When Assessment Reports Matter Most

Those costs make structured documentation a business necessity, not a formality. Assessment reports are especially critical in:

- High-volume hiring where multiple interviewers evaluate the same candidates

- Roles requiring compliance documentation to meet EEOC or OFCCP recordkeeping requirements

- Panel calibration processes where independent assessments must be compared before final decisions

- Sequential interview stages where candidate evaluations need consistency across different interviewers

What to Include in a Detailed Assessment Report

Candidate and Role Information

Every report must include basic metadata:

- Candidate name

- Role applied for

- Interview date and time

- Interviewer name(s)

- Stage of the hiring process

This metadata ensures the report is traceable and usable across teams and ATS platforms.

Competency or Evaluation Framework

List the specific skills, behaviors, or traits being assessed:

- Problem-solving ability

- Communication skills

- Technical proficiency

- Culture fit indicators

Define these competencies before the interview — without pre-set criteria, each interviewer assesses differently and reports become impossible to compare across candidates.

Once your framework is set, every rating in the report ties back to it.

Scored Ratings Per Criterion

Each competency should have a numerical or categorical rating, paired with a brief explanation of why that score was assigned:

- Numerical scales (1–5 or 0–10) work well for comparing candidates across a pipeline

- Categorical ratings (Exceeds / Meets / Below Expectations) are easier for non-technical hiring managers to interpret

- Either format requires a supporting note — a score without evidence is just a number

AltHire AI's platform uses a 0–10 numeric scale with dimensional performance scores across customizable criteria, tying each rating directly to candidate responses rather than interviewer impressions.

Behavioral Evidence and Verbatim Notes

For each criterion, include specific examples drawn directly from the candidate's responses:

- What they said

- How they framed it

- What it reveals about their capability

Vague impressions — "seemed confident," "good energy" — aren't defensible in a structured review process. Cite what the candidate actually said or did, and let the evidence carry the evaluation.
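One way to enforce this is a quick automated check before a report is accepted. The sketch below is only an illustration: the function name `find_weak_evidence`, the dictionary layout, the phrase list, and the `min_words` threshold are all assumptions, not part of any specific tool.

```python
# Phrases taken from the examples above; a real list would be longer.
VAGUE_PHRASES = ("seemed confident", "good energy")


def find_weak_evidence(ratings, min_words=8):
    """Return (competency, reason) pairs for ratings with missing or vague notes.

    `ratings` is a list of dicts with "competency", "score", and "evidence" keys.
    `min_words` is an arbitrary cutoff chosen for this sketch.
    """
    problems = []
    for rating in ratings:
        note = rating.get("evidence", "").strip()
        if not note:
            problems.append((rating["competency"], "no evidence"))
        elif any(phrase in note.lower() for phrase in VAGUE_PHRASES):
            problems.append((rating["competency"], "vague impression"))
        elif len(note.split()) < min_words:
            problems.append((rating["competency"], "too short to be specific"))
    return problems


# Made-up ratings for illustration only.
sample = [
    {"competency": "Communication skills", "score": 4, "evidence": "Seemed confident"},
    {"competency": "Problem-solving ability", "score": 3, "evidence": ""},
]
print(find_weak_evidence(sample))
```

A check like this cannot judge whether evidence is good, only whether it is obviously missing or generic, so it works best as a prompt for the interviewer rather than a gate on its own.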

Summary and Hiring Recommendation

Close the report with a concise paragraph synthesizing:

- Overall performance

- Standout strengths

- Key concerns

- Clear recommendation (advance, hold, or reject)

The summary should give any hiring manager enough context to understand the decision and act on it — without needing to read every scored criterion first.
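For teams that store reports as data rather than free-form documents, the components above map naturally onto a small record type. The following is a minimal sketch, assuming Python dataclasses; the class and field names are hypothetical and do not reflect any particular ATS or platform schema.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List

ALLOWED_RECOMMENDATIONS = {"advance", "hold", "reject"}


@dataclass
class CriterionRating:
    competency: str  # e.g. "Problem-solving ability"
    score: int       # on whatever scale the team has agreed on
    evidence: str    # what the candidate actually said or did


@dataclass
class AssessmentReport:
    # Candidate and role information
    candidate_name: str
    role: str
    interviewed_at: datetime
    interviewers: List[str]
    stage: str
    # Summary and hiring recommendation
    recommendation: str
    summary: str
    # Scored ratings, one per criterion in the evaluation framework
    ratings: List[CriterionRating] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject anything other than a clear advance / hold / reject decision.
        if self.recommendation not in ALLOWED_RECOMMENDATIONS:
            raise ValueError(f"unknown recommendation: {self.recommendation!r}")
```

Keeping the recommendation to a fixed set of values is a design choice in this sketch: it forces every report to end in an explicit decision.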

How to Create a Detailed Assessment Report: Step by Step

Step 1: Define Evaluation Criteria Before the Interview

Align on specific competencies and behaviors relevant to the role before asking a single question. Without that shared starting point, interviewers assess against different standards and their reports cannot be compared.

What to establish:

- Specific competencies relevant to the role

- Scoring scale and rating definitions

- Weight of each competency

- Behavioral indicators for each level

This framework becomes the backbone of every report generated from this process.
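If the framework assigns a weight to each competency, the overall score is a weighted average of the per-competency scores. Here is a minimal sketch of that arithmetic; the function name and the example weights and scores are invented for illustration.

```python
def weighted_overall(scores, weights):
    """Combine per-competency scores into one number using agreed weights.

    Both arguments are dicts keyed by competency name. Weights do not need
    to sum to 1; they are normalized by their total.
    """
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"unscored competencies: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Illustrative values on a 1-5 scale; weights are relative importance.
weights = {"Problem-solving ability": 4, "Communication skills": 3, "Technical proficiency": 3}
scores = {"Problem-solving ability": 4, "Communication skills": 3, "Technical proficiency": 5}
print(weighted_overall(scores, weights))  # (16 + 9 + 15) / 10 = 4.0
```

Raising an error for an unscored competency, instead of silently skipping it, keeps an incomplete report from producing a misleading total.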

Step 2: Use a Standardized Report Template

Create or adopt a consistent template that includes all required fields so nothing is left to individual preference or memory.

Template requirements:

- Candidate information section

- Evaluation criteria with scoring fields

- Evidence documentation areas

- Summary and recommendation sections

Ensure the template is role-appropriate. A technical interview report for an engineer will look different from a behavioral interview report for a customer success hire.
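One way to keep structure fixed while varying criteria by role is to generate the blank template from a shared section list plus a per-role criteria list. The sketch below assumes plain-text output; the role names and their criteria are hypothetical placeholders, and `blank_template` is not a function from any existing library.

```python
# Sections every report shares, regardless of role.
SECTIONS = (
    "Candidate information",
    "Evaluation criteria and scores",
    "Evidence",
    "Summary and recommendation",
)

# Hypothetical per-role criteria; replace with the competencies your team agreed on.
ROLE_CRITERIA = {
    "engineer": ["Technical proficiency", "Problem-solving ability", "Communication skills"],
    "customer success": ["Communication skills", "Problem-solving ability", "Culture fit indicators"],
}


def blank_template(role):
    """Return a plain-text template with the shared sections and role-specific criteria."""
    criteria = ROLE_CRITERIA[role]
    lines = [f"Assessment report: {role}", ""]
    for section in SECTIONS:
        lines.append(section)
        if section == "Evaluation criteria and scores":
            lines.extend(f"  {name}: score ____" for name in criteria)
        elif section == "Evidence":
            lines.extend(f"  {name}: ____" for name in criteria)
        lines.append("")
    return "\n".join(lines)


print(blank_template("engineer"))
```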

Step 3: Document Observations During or Immediately After the Interview

Record specific candidate responses as close to real time as possible. Research on the Ebbinghaus forgetting curve demonstrates that memory retention drops sharply within the first 24 hours.

Documentation best practices:

- Take brief, objective notes during the interview

- Expand notes immediately afterward

- Note both what was said and how it maps to evaluation criteria

- Don't batch notes at the end of the week

Step 4: Apply Scores Using the Rubric — Not Your Gut

Rate each competency independently using the pre-defined rubric before writing the overall summary. This limits the halo effect, where one strong area inflates all other scores.

Scoring discipline:

- Evaluate each criterion separately

- Reference your rubric definitions

- Attach evidence to every score

- Complete scoring before reading others' assessments

For teams using structured AI-powered interviews, platforms like AltHire AI auto-generate scored, criterion-by-criterion reports immediately after each session. This removes scorer delay and enforces rubric consistency across every candidate. Each report includes dimensional performance scores, question-by-question evaluation, and AI flags for inconsistencies.

Step 5: Write the Summary and Recommendation

Once scoring is complete, synthesize the results into a summary paragraph highlighting:

- Top two or three strengths

- Any meaningful gaps

- How the candidate compares to role requirements

- Clear, explicit recommendation with reasoning

Ambiguous recommendations like "could be a fit with more information" delay decisions and waste calibration time.

Key Factors That Affect the Quality of Your Assessment Reports

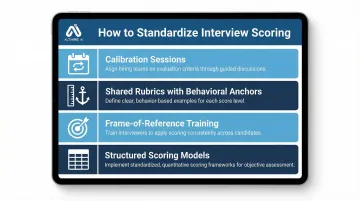

Scoring Consistency Across Interviewers

If two interviewers assess the same competency with different mental benchmarks, their reports are incomparable. Research shows that panel interviews yield inter-rater reliability of 0.74, but reliability plummets to 0.44 when candidates are evaluated by separate interviewers in sequential stages. That gap compounds quickly across a hiring pipeline with multiple rounds.

Solutions for consistency:

- Calibration sessions before interviews begin

- Shared rubrics with behavioral anchors

- Frame-of-reference training to align interviewer schemas

- Structured scoring models enforced across all interviewers

Studies by Melchers et al. demonstrate that behaviorally anchored rating scales (BARS) and frame-of-reference training substantially improve both rating accuracy and inter-rater reliability.
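Teams that want to track their own consistency can run a rough check on past scores. The sketch below uses a Pearson correlation between two interviewers' scores for the same candidates as a simple agreement proxy, plus a per-competency gap check for a single candidate. This is an assumption made for illustration: it is not necessarily how the studies cited above computed reliability, and the `threshold` of 2 points is arbitrary.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two raters' scores for the same candidates."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a rater gives identical scores")
    return cov / sqrt(var_x * var_y)


def score_gaps(rater_a, rater_b, threshold=2):
    """List competencies where two raters differ by at least `threshold` points."""
    shared = rater_a.keys() & rater_b.keys()
    return sorted(name for name in shared if abs(rater_a[name] - rater_b[name]) >= threshold)


# Made-up scores: one competency, five candidates, two interviewers.
print(pearson([3, 4, 2, 5, 4], [2, 4, 3, 5, 3]))

# Made-up scores: one candidate, two interviewers.
a = {"Communication skills": 4, "Technical proficiency": 2}
b = {"Communication skills": 3, "Technical proficiency": 5}
print(score_gaps(a, b))  # ['Technical proficiency']
```

Competencies that show up in the gap list are natural starting points for a calibration session.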

Specificity of Behavioral Evidence

Better evidence produces better reports. Vague phrases like "seemed confident" or "good energy" aren't defensible — and they're impossible to compare across candidates during calibration.

What specificity looks like:

- ❌ "Good communicator"

- ✅ "Explained technical architecture using clear analogies, adjusted explanation when I asked clarifying questions, and summarized key points at the end"

Reports should cite what the candidate actually said or did, not impressions.

Timeliness

Recall accuracy drops significantly within hours of an interview. Teams should establish a norm for how quickly reports must be completed after a session ends.

Recommended timelines:

- Within 2 hours: Ideal for maximum accuracy

- Within 24 hours: Minimum acceptable standard

- Beyond 24 hours: Significant memory degradation

Interview automation platforms like AltHire AI sidestep this problem by generating structured reports the moment an interview ends — no recall required.
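For teams completing reports manually, the recommended timelines can be turned into a simple check on submission timestamps. This is a minimal sketch; the tier labels and the function name `timeliness_tier` are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta


def timeliness_tier(interview_end, submitted_at):
    """Classify a report by how long after the interview it was submitted."""
    delay = submitted_at - interview_end
    if delay < timedelta(0):
        raise ValueError("report submitted before the interview ended")
    if delay <= timedelta(hours=2):
        return "ideal"
    if delay <= timedelta(hours=24):
        return "acceptable"
    return "late"


# Illustrative timestamps.
end = datetime(2024, 1, 15, 11, 0)
print(timeliness_tier(end, end + timedelta(minutes=90)))  # ideal
print(timeliness_tier(end, end + timedelta(hours=5)))     # acceptable
print(timeliness_tier(end, end + timedelta(days=2)))      # late
```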

Bias Controls Built Into the Process

Structured report formats reduce affinity bias, recency bias, and confirmation bias — three of the most common ways gut feeling overrides evidence.

Effective bias controls:

- Require evidence per criterion

- Prohibit free-form general impressions as primary input

- Enforce rubric-based scoring before group discussion

- Separate individual report completion from debrief discussions

Research by Huffcutt & Roth shows that high-structure interviews have lower racial group differences on average than low-structure interviews. Standardization, in other words, is associated not only with better consistency but also with smaller demographic disparities than unstructured processes tend to produce.
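The controls listed above, evidence per criterion and independent completion before the debrief, can be enforced with a simple readiness check. The sketch below assumes reports are stored as nested dictionaries; the data layout and the function name `blockers_for_debrief` are hypothetical.

```python
def blockers_for_debrief(panel, reports, criteria):
    """Return {interviewer: [issues]} for anyone whose report is not debrief-ready.

    `reports` maps interviewer name -> {criterion: {"score": ..., "evidence": ...}}.
    An empty result means every panelist has scored every criterion with evidence.
    """
    blockers = {}
    for interviewer in panel:
        ratings = reports.get(interviewer)
        if ratings is None:
            blockers[interviewer] = ["report not submitted"]
            continue
        issues = []
        for criterion in criteria:
            entry = ratings.get(criterion)
            if entry is None:
                issues.append(f"{criterion}: not scored")
            elif not entry.get("evidence", "").strip():
                issues.append(f"{criterion}: no evidence")
        if issues:
            blockers[interviewer] = issues
    return blockers


# Made-up panel data for illustration.
criteria = ["Communication skills", "Technical proficiency"]
reports = {
    "Interviewer A": {
        "Communication skills": {"score": 4, "evidence": "Summarized key points at the end"},
        "Technical proficiency": {"score": 3, "evidence": ""},
    },
}
print(blockers_for_debrief(["Interviewer A", "Interviewer B"], reports, criteria))
```

Scheduling the debrief only when this returns an empty dictionary keeps group discussion from shaping individual scores.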

Common Mistakes to Avoid When Creating Assessment Reports

Writing Impressions Instead of Evidence

Phrases like "great cultural fit" or "wasn't a strong communicator" with no supporting examples are the most common report failure. They give other reviewers nothing to verify or compare, and they can expose organizations to bias claims.

The fix: For every rating, include at least one specific example of what the candidate said or did that supports that score.

Completing the Report Days After the Interview

Delayed documentation is one of the most reliable ways to degrade report quality. Details blur and strong moments get forgotten. The final rating ends up reflecting how the interviewer felt walking out, not what the candidate actually demonstrated.

The fix: Establish and enforce a 24-hour documentation SLA. Platforms that auto-generate reports from structured interview data eliminate the delay entirely.

Using a Different Format for Every Role or Interviewer

Without a standardized template, reports cannot be compared, calibrated, or used to improve the interview process over time. When formats vary by interviewer, hiring decisions inherit that inconsistency — and it shows in outcomes.

What works better: Create role-specific templates that maintain consistent structure while adapting evaluation criteria to each position's requirements.

Frequently Asked Questions

What is a detailed assessment?

In hiring, a detailed assessment is a criterion-by-criterion evaluation of a candidate conducted during or after an interview process. Each competency is documented with specific behavioral evidence and a scored rating, creating a structured, defensible record of the decision.

What is included in an assessment report?

An assessment report includes candidate and role metadata, evaluation criteria with scored ratings for each competency, behavioral evidence supporting each score, complete interview documentation (notes, transcripts, or recordings), and a summary with a clear hiring recommendation and rationale.

How long should a candidate assessment report be?

Length depends on role complexity and process stage, but most structured reports are one to two pages. Completeness matters more than length: every criterion should have a score and at least one evidence-backed comment.

Who should write a candidate assessment report?

Every interviewer who participated in the evaluation should complete their own report independently before any debrief discussion. This prevents groupthink and ensures the calibration meeting reflects individual, uninfluenced assessments rather than consensus formed prematurely.

How do you make assessment reports unbiased?

Bias is reduced by defining criteria before interviews begin, scoring against behavioral anchors, requiring specific evidence for every rating, and completing reports independently before group discussion. Structured formats prevent gut-feel impressions from overriding objective scores.

Can assessment reports be automated?

Yes. AI-powered platforms generate structured reports automatically from recorded sessions, scoring responses against criteria and flagging patterns without manual effort. AltHire AI, for example, produces reports instantly upon interview completion, covering dimensional scores, time-stamped transcripts, and proctoring data, reducing documentation time and scorer inconsistency.